05 - Process Scheduling

Problem 1: Scheduler Differences

Section titled “Problem 1: Scheduler Differences”Briefly describe the difference between the long-term scheduler and short-term (CPU) scheduler.

Instructor Solution

The long-term scheduler (or job scheduler) determines which programs are admitted into the system for processing. It selects processes from a “job pool” on the disk and loads them into memory, thereby controlling the degree of multiprogramming (the number of processes in memory).

The short-term scheduler (or CPU scheduler) is much faster and more frequent. It selects which process, from those already residing in memory and in the “ready” state, should be executed next by the CPU. Its primary goal is to optimize system performance based on criteria like throughput and latency.

Elaboration (by LLM)

The Two-Tier Scheduling Model:

Modern operating systems manage process execution at two distinct levels. Think of it like a restaurant: the long-term scheduler is the host deciding how many customers (processes) can be seated (loaded into memory) at once, while the short-term scheduler is the waiter deciding which seated customer to serve next.

Long-Term Scheduler (Job Scheduler):

- Runs infrequently — may be invoked only when a process terminates or memory becomes available

- Controls degree of multiprogramming: how many processes reside in memory simultaneously

- Pulls jobs from a disk-resident job pool (batch systems) or admits newly submitted jobs

- Balancing I/O-bound vs. CPU-bound processes here leads to better overall utilization

Short-Term Scheduler (CPU Scheduler):

- Runs very frequently — potentially hundreds of times per second

- Selects the next ready process to receive CPU time from the in-memory ready queue

- Must be extremely fast; even a 10 ms scheduling decision on a 100 ms quantum = 10% overhead

- Uses algorithms like FCFS, SJF, Priority, or Round Robin

Comparison Table:

| Property | Long-Term Scheduler | Short-Term Scheduler |

|---|---|---|

| Also called | Job scheduler | CPU scheduler |

| Frequency | Infrequent (seconds to minutes) | Very frequent (milliseconds) |

| Pool it selects from | Disk (job pool) | Memory (ready queue) |

| What it controls | Degree of multiprogramming | Which process gets the CPU |

| Speed requirement | Slow is acceptable | Must be very fast |

Problem 2: Context Switch Events

Section titled “Problem 2: Context Switch Events”List 4 events that might occur to cause the kernel to context switch from one user process to another.

Instructor Solution

A kernel will trigger a context switch from one user process to another when:

- I/O Completion: The I/O request of a process in Waiting (Blocked) state completes and the process moves from the Blocked state to the Ready state.

- I/O Request: A process makes an I/O request for a slow resource (like reading from a disk), causing it to move from a Running to a Blocked state.

- Time-Slice Expiration: In preemptive multitasking, the CPU scheduler decides the process has used its allotted time (quantum) and forces a switch.

- New Process Arrival: A new process with a higher priority arrives and the OS decides to stop the currently executing process and schedule the newly arrived process.

- Process Termination: The current process finishes its execution or crashes, requiring the kernel to select a new process to run.

Elaboration (by LLM)

What Is a Context Switch?

A context switch is the mechanism by which the kernel saves the current process’s execution state (registers, PC, stack pointer, etc.) and loads a previously saved state of another process — effectively pausing one and resuming another. It is the fundamental mechanism enabling multitasking on a single CPU.

The 5-State Process Model:

Understanding context switch triggers requires knowing the states a process can occupy:

admit New ──────────────► Ready ◄────────────── Blocked │ I/O done ▲ scheduler │ │ dispatch ▼ │ Running ───────────── Blocked │ I/O request │ ▼ TerminatedBreaking Down Each Trigger:

- I/O Request — Process voluntarily leaves Running → Blocked. The CPU would otherwise sit idle, so the scheduler immediately picks the next Ready process.

- I/O Completion — An interrupt fires (hardware signals the CPU). The kernel moves the waiting process from Blocked → Ready. If its priority is high enough, it may preempt the current process right away.

- Time-Slice Expiration — A timer interrupt fires at the end of the quantum. The kernel’s preemptive scheduler forces the current process from Running → Ready and selects another.

- Higher-Priority Arrival — A

fork()orexec()creates a high-priority process. Under preemptive priority scheduling, it may immediately displace the running process. - Process Termination — The process calls

exit()or crashes. With no process to continue, the scheduler must select a new one; there is no “current state” to save.

Problem 3: Preemptive vs. Non-Preemptive Scheduling

Section titled “Problem 3: Preemptive vs. Non-Preemptive Scheduling”What’s the difference between preemptive and non-preemptive scheduling? Briefly explain. Which one is more suitable for a timesharing system?

Instructor Solution

In non-preemptive scheduling, a process keeps the CPU until it either terminates or voluntarily switches to a waiting state (e.g., for I/O). The kernel cannot force it to stop. In preemptive scheduling, the kernel can interrupt a running process at any time to give the CPU to another process, usually based on a timer interrupt or the completion of an I/O request for process with a higher priority.

Preemptive scheduling is more suitable for a timesharing system. This is because timesharing requires the OS to rapidly cycle through multiple users/processes to ensure that everyone receives a responsive experience, which is only possible if the OS can force processes to yield the CPU after their time slice (quantum) expires.

Elaboration (by LLM)

Non-Preemptive Scheduling — “Cooperative”:

A process holds the CPU until one of two things happens:

-

- It voluntarily yields (e.g., blocks on I/O)

- It terminates

The OS has no mechanism to forcibly reclaim the CPU. This is sometimes called cooperative multitasking — every process must “cooperate” for the system to remain responsive.

Preemptive Scheduling:

The OS retains control via a hardware timer interrupt. When the interrupt fires, the kernel can inspect priorities, check for expired quanta, and forcibly evict the current process from the CPU. No cooperation from the running process is needed.

Side-by-Side Comparison:

Non-Preemptive

- Process runs until it blocks or exits

- No timer interrupts needed for scheduling

- Simple to implement; fewer race conditions

- Poor responsiveness for interactive users

- One misbehaving process can starve all others

- Example algorithms: FCFS, non-preemptive SJF

Preemptive

- OS can interrupt process at any time

- Requires timer hardware + interrupt handling

- More complex; needs careful synchronization

- Excellent responsiveness for interactive use

- Fair CPU distribution across processes

- Example algorithms: Round Robin, preemptive Priority

Why Timesharing Requires Preemption:

In a timesharing system (e.g., a Unix shell with multiple users), the illusion of simultaneity only works if the OS can take the CPU away from any process on a schedule. If a user runs an infinite loop, non-preemptive scheduling would freeze every other user’s terminal — preemption is what prevents that.

Problem 4: Context Switch State Preservation

Section titled “Problem 4: Context Switch State Preservation”What state is saved on a context switch between threads of the same process?

Instructor Solution

When the kernel performs a context switch between threads of the same process, it must save the Thread Context, which consists of:

- Program Counter (PC): The memory address of the next instruction to execute.

- CPU Registers: The current state of general-purpose and floating-point registers.

- Stack Pointer (SP): The location of the thread’s private stack (containing local variables and function calls).

Crucially, because threads share the same address space, the kernel does not need to save or reload memory management information like page tables or the heap.

Elaboration (by LLM)

Saved vs. Shared Resources:

The key insight is that threads within the same process share most resources. A context switch between two threads of the same process is therefore much cheaper than a switch between two different processes.

| Resource | Saved on Thread Switch? | Reason |

|---|---|---|

| Program Counter (PC) | ✅ Yes | Each thread has its own point of execution |

| CPU Registers (GP, FP) | ✅ Yes | Each thread’s computation state is independent |

| Stack Pointer (SP) | ✅ Yes | Each thread has its own private call stack |

| Page tables / address space | ❌ No | All threads share the same virtual memory |

| Heap | ❌ No | Shared among all threads of the process |

| File descriptor table | ❌ No | Shared among all threads of the process |

| Signal handlers | ❌ No | Process-level resource |

The Thread Control Block (TCB):

The kernel stores the saved thread state in a Thread Control Block. When the thread is rescheduled, the kernel restores these values from the TCB back into the hardware registers.

TCB for Thread T1┌─────────────────────┐│ PC = 0x401234 │ ← Resume here│ SP = 0x7fff8a00 │ ← Thread's own stack│ R0 = 42 ││ R1 = 0x5001 ││ ... ││ FP regs │└─────────────────────┘Why Is This Cheaper Than a Process Switch?

A process context switch additionally requires:

- Saving/restoring the entire page table base register (CR3 on x86)

- TLB flush — invalidating all cached virtual-to-physical address mappings

- Potentially reloading CPU caches since a new address space is loaded

Since a thread switch stays within the same address space, none of that is needed. The TLB and caches remain warm, which is a significant performance advantage.

Problem 5: Round Robin Time Quantum

Section titled “Problem 5: Round Robin Time Quantum”Selecting a proper time quantum is important in round robin scheduling. What happens if the quantum is too big? What happens if it is too small? Briefly explain.

Instructor Solution

In Round Robin scheduling, the time quantum determines the system’s balance between efficiency and responsiveness:

- If the quantum is too big: The algorithm behaves like First-Come, First-Served (FCFS). Long processes will monopolize the CPU, causing “convoy effects” where short, interactive tasks must wait a long time, leading to poor responsiveness in a multi-user or UI-driven system.

- If the quantum is too small: The system suffers from excessive overhead. Because the CPU spends a higher percentage of its time performing context switches (saving and loading process states) rather than executing actual instructions, the overall system throughput drops significantly.

Elaboration (by LLM)

The Quantum as a Dial:

Think of the time quantum as a dial with two failure modes at the extremes and a “sweet spot” in the middle:

Short q ◄─────────────────────────────────► Long q (too small) [sweet spot] (too big)

High overhead Behaves like FCFS Low throughput Poor interactivity Great responsiveness Long wait timesQuantifying Overhead:

If a context switch costs ms and the quantum is ms, the fraction of time wasted on overhead is:

For example, if and , then of CPU time is overhead — already quite high. Increasing to drops this to .

The FCFS Degeneration:

When , Round Robin degenerates into First-Come, First-Served:

- The first process in the queue runs to completion before any other runs

- Short jobs stuck behind long jobs experience the convoy effect: a long CPU-bound job forces all subsequent jobs to wait, just like slow trucks on a highway

Practical Guidance:

In modern systems, time quanta are typically chosen so that:

- The quantum is longer than 80% of CPU bursts (so most processes complete before being preempted)

- A typical value is 10–100 ms on general-purpose systems

Problem 6: Starvation in Scheduling

Section titled “Problem 6: Starvation in Scheduling”What’s starvation as it applies to process scheduling? In which scheduling discipline is it more likely to occur? Give an example.

Instructor Solution

Starvation occurs when a process is perpetually denied CPU time because higher-priority processes are constantly being scheduled ahead of it. The process remains in the “Ready” state indefinitely, even though it is technically capable of running. It is most likely to occur in Priority Scheduling (specifically Fixed-Priority) and Shortest Job First (SJF).

Example: In a system that always executes the shortest task first, a very long process might never run if new, short processes continue to arrive every few seconds. The long process “starves” while the CPU handles a never-ending stream of quick tasks or tasks with higher priority.

Elaboration (by LLM)

Starvation Defined:

Starvation is a liveness failure — a process is live (not deadlocked, not terminated) but makes no forward progress because it is perpetually deprioritized. It is distinct from deadlock: a starved process could run if given the CPU; it simply never gets it.

Where Starvation Arises:

- Fixed-Priority Scheduling — Low-priority processes are only scheduled when no higher-priority process is ready. If high-priority processes arrive continuously, low-priority ones never run.

- Shortest Job First (SJF) / Shortest Remaining Time First (SRTF) — A very long job may wait indefinitely as shorter jobs continuously arrive and cut in line.

- Round Robin — Starvation is largely prevented because every process gets a turn, regardless of length or priority. This is one of RR’s key advantages.

Visualizing Starvation in SJF:

Time ──────────────────────────────────────────────► J1(10ms) J2(2ms) J3(1ms) J4(3ms) ↑ ↑ ↑ New short jobs keep arriving...

Long job L (100ms) is admitted at t=0 but never runs becauseshorter jobs continuously arrive and are always selected first.The Solution: Aging

The standard remedy for starvation is aging — periodically increasing the priority of processes that have been waiting a long time. Eventually the starved process reaches the highest priority level and gets scheduled.

where is an aging coefficient. Once exceeds all competing processes, will be scheduled.

Problem 7: Round Robin for Batch Jobs

Section titled “Problem 7: Round Robin for Batch Jobs”A friend of yours is to design an OS that would run batch jobs, and s/he decides to employ Round Robin Scheduling algorithm. Is your friend making a good choice. Why or why not?

Instructor Solution

No, Round Robin is generally a poor choice for a pure batch system. Batch jobs are typically long-running, non-interactive tasks where the primary goal is maximum throughput and efficiency. Round Robin introduces frequent context-switching overhead and interrupts long-running processes unnecessarily. For batch processing, First-Come, First-Served (FCFS) or Shortest Job First (SJF) are better because they minimize overhead and complete individual jobs faster.

Elaboration (by LLM)

Understanding the Mismatch:

Different workloads have different scheduling needs. The error here is applying a scheduling algorithm designed for one workload type (interactive/timesharing) to a fundamentally different one (batch).

Characteristics of Batch Workloads:

- Jobs are long-running (minutes to hours — e.g., data processing, scientific simulations)

- Jobs are non-interactive — no user is waiting for quick feedback

- Primary metrics are throughput (jobs/hour) and turnaround time (wall-clock time per job)

- Fairness and response time are largely irrelevant — no human is sitting at a terminal

Why Round Robin Hurts Batch Jobs:

Round Robin was designed to give each process a frequent turn so that interactive users don’t notice delays. For batch jobs:

- Context switches happen constantly, even though no user needs a response

- Each switch wastes CPU time saving/restoring state that could be used running the job

- Turnaround time for each job is stretched out because every job is constantly interrupted

Better Alternatives for Batch Systems:

| Algorithm | Why It Fits Batch |

|---|---|

| FCFS | Zero preemption overhead; simple and predictable |

| SJF (non-preemptive) | Minimizes average turnaround time across all jobs |

| Priority (non-preemptive) | Lets critical jobs finish first without unnecessary interruption |

Analogy:

Imagine a laundry service. Batch scheduling is like washing one load completely before starting the next (FCFS) — efficient and simple. Round Robin would be like running every machine for 10 minutes, then rotating — the machines waste time stopping and starting, and no load finishes quickly.

Problem 8: Ready to Waiting State Transition

Section titled “Problem 8: Ready to Waiting State Transition”Is it possible for a process to move from the Ready state to the Waiting (blocked) state? Why or why not? Briefly justify your answer.

Instructor Solution

No, a process cannot move directly from the Ready state to the Waiting (Blocked) state. Justification: A process only enters the Waiting state when it requests a resource (like an I/O operation) that isn’t immediately available. To make such a request, the process must execute a system call, which requires the CPU. Since a process in the Ready state is not currently executing on the CPU, it cannot perform the actions necessary to block itself. It must first be scheduled to the Running state.

Elaboration (by LLM)

The Process State Diagram:

The valid transitions in the classic 5-state model are strictly defined:

┌───────────────────────────────┐ │ admit │ New ─────────► Ready ◄──────────────── Blocked │ │ ▲ │ scheduler │ │ │ dispatch ▼ I/O req │ │ Running ─────────────────► Blocked │ │ │ └──────────────────────────────► Terminated exit / crashNotice: there is no direct arrow from Ready → Blocked. This is not an arbitrary design choice — it follows directly from how blocking works.

Why the Transition Is Impossible:

Blocking requires executing code. Specifically, to block on I/O:

-

- The process must call a system call (e.g.,

read(),write(),recv()) - The kernel handles the call and determines the resource is unavailable

- The kernel marks the process as Blocked and moves it off the ready queue

Step (a) requires the process to be executing on the CPU. A Ready process has not been dispatched to the CPU yet — it has no opportunity to call read() or any other blocking system call. Therefore, it cannot transition to Blocked without first going through Running.

Informal Analogy:

A Ready process is like a customer standing in line at a checkout counter. A Blocked process is like a customer who stepped aside because they forgot something. You can’t “step aside” to go get something if you haven’t reached the counter yet — you first need to be served (Running), then choose to pause (block).

Problem 9: Round-Robin Queue Placement

Section titled “Problem 9: Round-Robin Queue Placement”In Round-Robin scheduling, new processes are placed at the end of the queue, rather than at the beginning. Suggest a reason for this.

Instructor Solution

This design ensures Fairness and prevents Starvation. By placing new processes at the end of the queue, the system follows a First-In-First-Out (FIFO) logic that guarantees every process already in the “Ready” state gets its allotted time slice before a newcomer can “cut the line.” If new processes were placed at the beginning, a constant stream of incoming tasks could indefinitely postpone the execution of existing ones leading to starvation.

Elaboration (by LLM)

Visualizing Queue Placement:

Consider a system with processes P1, P2, P3 already in the ready queue, and a new process P4 arriving.

End-of-queue insertion (correct)

Before P4 arrives:[P1, P2, P3] ← CPU serves P1 next

After P4 arrives:[P1, P2, P3, P4]

Order served: P1 → P2 → P3 → P4 → ...All existing processes run before P4.Front-of-queue insertion (problematic)

Before P4 arrives:[P1, P2, P3] ← CPU serves P1 next

After P4 arrives:[P4, P1, P2, P3] ← P4 cuts line!

If new processes keep arriving, P1, P2, P3may never run → starvation.The FIFO Property:

End-of-queue insertion gives Round Robin a FIFO ordering for when processes are first served. Combined with the circular nature of the queue (each process returns to the end after using its quantum), this ensures:

- Every process already waiting is served before any newcomer

- No process can be indefinitely blocked by a stream of arrivals

- The system provides a bounded wait time: with processes and quantum , the worst-case wait before the next turn is

Connection to Starvation Prevention:

This is directly related to Problem 6. Front-of-queue insertion would reintroduce the same starvation risk that Round Robin was designed to avoid: a continuous stream of new arrivals at the front could block existing processes indefinitely.

Problem 10: Round-Robin Process Bias

Section titled “Problem 10: Round-Robin Process Bias”Explain why Round-Robin scheduling tends to favor CPU bound processes over I/O bound ones.

Instructor Solution

Round-Robin favors CPU-bound processes because they typically use their entire time quantum, allowing them to maximize their CPU occupancy and stay at the front of the scheduler’s mind once their turn returns. I/O-bound processes, conversely, often execute for only a fraction of their quantum before blocking for I/O. When they finish their I/O and return to the Ready queue, they are placed at the back of the line, effectively “losing” the remainder of their unused time. This results in I/O-bound processes getting significantly less total CPU time relative to their needs compared to CPU-heavy tasks.

Elaboration (by LLM)

CPU-Bound vs. I/O-Bound Processes:

| Property | CPU-Bound | I/O-Bound |

|---|---|---|

| Typical behavior | Long CPU bursts, rare I/O | Short CPU bursts, frequent I/O |

| Examples | Video encoding, matrix multiplication | Web server, text editor, database queries |

| Quantum usage | Uses full quantum | Blocks before quantum expires |

| Return position after unblocking | End of queue | End of queue |

The Bias Explained Step by Step:

Suppose quantum and the ready queue has CPU-bound process C and I/O-bound process I.

Round 1: C runs for 10ms (uses full quantum) → returns to end of queue I runs for 2ms → blocks for I/O (8ms of quantum unused)

While I is blocked: C runs again. And again. And again. C accumulates many full quanta while I waits on I/O.

Round N (I/O finishes): I re-enters at the END of the ready queue. But C has been running the whole time I was blocked.Quantifying the Unfairness:

Let = I/O-bound CPU burst = 2 ms, = 10 ms quantum, = I/O wait = 8 ms.

- I/O-bound process gets of CPU every many ms of wall time

- CPU-bound process gets of CPU every ms of wall time

The CPU-bound process receives more CPU time per cycle.

Practical Consequences:

- I/O-bound processes (interactive apps, servers) feel sluggish under heavy CPU-bound load

- The system’s I/O devices may sit idle while the CPU churns on compute-heavy tasks

- Overall system throughput suffers: I/O and CPU should ideally be used in parallel

Mitigation Strategies:

- Prioritize I/O-bound processes: Boost their priority after they unblock so they rejoin the queue at a higher priority or closer to the front

- Multi-level feedback queues (MLFQ): Reward processes that don’t use their full quantum (implicitly favoring I/O-bound processes) and penalize those that do

- Smaller quantum: Reduces the gap, but increases context switch overhead

Problem 11: CPU Scheduling Quanta

Section titled “Problem 11: CPU Scheduling Quanta”CPU scheduling quanta have remained about the same over the past 20 years, but processors are now over 1,000 times faster. Why has CPU scheduling quanta NOT changed?

Instructor Solution

The reason CPU scheduling quanta have remained largely unchanged (typically between 10ms and 100ms) despite processors becoming 1,000 times faster is that the quantum is determined by human perception, not processor speed. A quantum is designed to be short enough to create the illusion of concurrency for a human user—making the system feel “responsive”—but long enough so that the context switch overhead (the time spent saving/loading process states) remains a negligible fraction of the total execution time. While a modern CPU can execute many more instructions within that 100ms window than a CPU from 20 years ago, reducing the quantum further would:

- Not improve the user experience, as humans cannot perceive sub-millisecond responsiveness.

- Increase overhead, because the time required to perform a context switch (governed by memory and bus speeds) has not decreased as dramatically as raw CPU calculation speed.

Elaboration (by LLM)

Why the Quantum Exists at All:

A scheduling quantum (also called a time slice) is the maximum duration a process is allowed to run before the OS forcibly preempts it and selects the next process. It exists to share a single CPU fairly among many processes, giving each one a “turn.”

The Two Competing Constraints:

The quantum must satisfy two opposing requirements simultaneously:

- Short enough to feel responsive: If the quantum were 1 second, a user clicking a button might wait a full second before the UI process even gets a turn. Humans notice latency above roughly 100ms.

- Long enough to be efficient: Every quantum boundary triggers a context switch — saving registers, program counters, and memory maps, then loading those of the next process. That switch takes on the order of microseconds to low milliseconds. If the quantum were 1ms and context switches take 0.1ms, you’d waste ~10% of CPU time just switching.

Why Faster CPUs Don’t Change This:

The quantum is calibrated against human perception thresholds, which are biological constants — not hardware specs. A 100ms quantum felt responsive in 1995, and it still feels responsive today. Making it 10x shorter (to 10ms) would provide no perceivable UX benefit while increasing context switch overhead as a proportion of useful work.

The Asymmetry in Hardware Improvements:

| Factor | Improvement Since ~2005 |

|---|---|

| CPU compute speed | ~1,000× faster |

| Memory/bus latency (governs context switch cost) | ~2–5× faster |

| Human perception threshold | Unchanged |

Context switch cost is dominated by cache invalidation and memory operations, which have improved far more slowly than raw compute. So even if you wanted to shrink the quantum proportionally to CPU speed, the overhead fraction would grow, not shrink.

Problem 12: Shortest Job First

Section titled “Problem 12: Shortest Job First”Briefly explain how the shortest job first scheduling works.

Instructor Solution

Shortest Job First (SJF) scheduling selects the process with the smallest next CPU burst length from the Ready Queue to execute next. If two processes have the same burst length, First-Come, First-Served (FCFS) is typically used to break the tie. SJF is mathematically optimal for minimizing the average waiting time for a given set of processes because it moves short jobs to the front, clearing them out of the system quickly. There are two versions:

- Non-preemptive: Once a process starts, it holds the CPU until it finishes its burst.

- Preemptive (Shortest Remaining Time First): If a new process arrives with a shorter burst than the remaining time of the current process, the CPU is switched to the new process

Elaboration (by LLM)

Core Idea — The Scheduling Decision:

At each scheduling decision point, SJF looks at all processes in the Ready Queue and picks the one whose next CPU burst is the shortest. Think of it like a checkout line at a grocery store — you let the person with the fewest items go first to minimize everyone’s total waiting.

Why SJF Minimizes Average Waiting Time:

Imagine three processes with burst times of 1, 5, and 10 units. Compare two orderings:

SJF Order: 1 → 5 → 10

- P(1) waits: 0

- P(5) waits: 1

- P(10) waits: 6

- Average: (0+1+6)/3 = 2.33

Reversed Order: 10 → 5 → 1

- P(10) waits: 0

- P(5) waits: 10

- P(1) waits: 15

- Average: (0+10+15)/3 = 8.33

Running shorter jobs first “unblocks” them quickly and reduces how long they contribute to other processes’ waiting time.

Non-Preemptive vs. Preemptive SJF:

| Property | Non-Preemptive SJF | Preemptive SJF (SRTF) |

|---|---|---|

| When decision is made | Only when CPU becomes free | On every new arrival |

| Can interrupt running process? | No | Yes |

| Optimal for? | Batch systems | Interactive/dynamic systems |

| Implementation complexity | Lower | Higher |

The Practical Problem — Predicting Burst Times:

SJF requires knowing the next CPU burst length in advance — information the OS doesn’t have. In practice, the OS estimates burst times using an exponential average (also called aging):

Where is the actual most recent burst, is the previous estimate, and is a weighting factor. This makes SJF feasible in real systems by learning from past behavior.

Problem 13: Multi-level Feedback Queuing

Section titled “Problem 13: Multi-level Feedback Queuing”Briefly explain multi-level feedback queuing scheduling algorithm.

Instructor Solution

Multilevel Feedback Queue (MLFQ) scheduling uses multiple queues with different priority levels to categorize processes based on their CPU-burst behavior. New processes enter the highest-priority queue and are granted a short time quantum. If a process uses its entire quantum (CPU-bound), it is demoted to a lower-priority queue with a longer quantum. If a process blocks for I/O (I/O-bound), it stays at or moves to a higher-priority queue. This dynamically separates interactive tasks from background batch jobs, optimizing both responsiveness and throughput.

Elaboration (by LLM)

The Motivation — Unknown Burst Times:

SJF is optimal but needs burst times in advance. MLFQ sidesteps this by observing process behavior over time and adjusting priorities dynamically. It’s a self-tuning scheduler.

Structure of the Queue Hierarchy:

A typical MLFQ has 3+ queues, each with increasing quantum size and decreasing priority:

Queue 0 (Highest Priority) │ Quantum = 8ms │ ← New arrivals start hereQueue 1 (Medium Priority) │ Quantum = 16ms │Queue 2 (Lowest Priority) │ Quantum = 32ms │ ← CPU-bound jobs sink here (FCFS at bottom, sometimes)The OS always runs processes from the highest non-empty queue.

The Promotion/Demotion Rules:

- Demotion: A process that uses its full quantum without blocking is inferred to be CPU-bound → move it down one queue.

- No demotion (or promotion): A process that blocks before its quantum expires is inferred to be I/O-bound or interactive → keep it at the current level or promote it.

This means interactive processes (which frequently block waiting for keyboard input, disk reads, etc.) naturally float to the top, getting fast response times. Long-running batch jobs slowly sink to the bottom where they run with larger quanta (more efficient for them) at lower priority.

Illustrative Flow:

New Process → [Q0, 8ms quantum] │ ├─ Blocks for I/O before 8ms? → Stays in Q0 (interactive) │ └─ Uses full 8ms? → Demoted to [Q1, 16ms quantum] │ ├─ Blocks for I/O? → Stays in Q1 │ └─ Uses full 16ms? → Demoted to [Q2, 32ms quantum] (runs as batch/background job)Starvation Prevention via Aging:

If a process sits in a low-priority queue for too long without running, many MLFQ implementations periodically boost all processes back to the top queue. This prevents indefinite starvation of CPU-bound jobs.

| Process Type | Behavior | MLFQ Effect |

|---|---|---|

| Interactive (keyboard/UI) | Blocks frequently | Stays high-priority, fast response |

| I/O-bound (disk reads) | Blocks often | Stays high-priority |

| CPU-bound (computation) | Rarely blocks | Sinks to low-priority, large quantum |

Problem 14: Optimal Scheduling for Maximum Throughput

Section titled “Problem 14: Optimal Scheduling for Maximum Throughput”Consider a set of “n” tasks with known burst times to be run on a uniprocessor machine. Which scheduling algorithm will result in the maximum throughput, i.e., minimum average turnaround time. Justify your answer.

Instructor Solution

The algorithm that results in the minimum average turnaround time (and thus high throughput) is Shortest Job First (SJF). Average turnaround time is minimized when the shortest tasks are completed first. By scheduling the smallest burst time at the earliest possible moment, we ensure that the waiting time for all subsequent processes is kept to a minimum. Mathematically, this moves the “waiting” cost of long jobs to the end of the schedule, preventing them from delaying a large number of smaller tasks.

Elaboration (by LLM)

Turnaround Time vs. Throughput — Connecting the Definitions:

- Turnaround time for a process = Finish Time − Arrival Time

- Average turnaround time = mean of turnaround times across all processes

- Throughput = number of processes completed per unit time

Minimizing average turnaround time is equivalent to maximizing throughput because completing jobs faster increases the rate at which the system clears work.

Mathematical Justification for SJF:

Assume all jobs arrive at time 0 with burst times (sorted in ascending order). The finish time of job in this order is:

The average turnaround time (equal to average finish time when all arrive at time 0) is:

Each job appears in the finish-time sum for every job scheduled after it (including itself). So job contributes to the total. To minimize this sum, we should pair the largest coefficient with the smallest — that is, sort jobs in ascending order of burst time.

Intuition with a Small Example:

Three jobs: burst times 2, 4, 8. Compare SJF vs. longest-job-first:

SJF: 2 → 4 → 8

- , ,

LJF: 8 → 4 → 2

- , ,

SJF yields a 35% reduction in average turnaround time here. The long job’s cost is “amortized” over fewer other processes when placed last.

Problem 15: SRTF and Starvation

Section titled “Problem 15: SRTF and Starvation”True/False: Shortest Remaining Time First scheduling may cause starvation. Justify your answer.

Instructor Solution

True. Shortest Remaining Time First (SRTF) is the preemptive version of SJF. If a steady stream of new processes with very short burst times keeps arriving, a process with a long burst time will be constantly preempted or bypassed. It will remain in the Ready queue indefinitely, unable to finish its execution, which is the definition of starvation.

Elaboration (by LLM)

What is Starvation?

Starvation occurs when a process is indefinitely denied access to the CPU even though it is in the Ready queue. It isn’t blocked (waiting for I/O) — it’s ready to run, but the scheduler keeps choosing other processes.

How SRTF Causes Starvation — A Concrete Scenario:

t=0: P_long arrives, burst = 100 units. Starts running.t=1: P_short1 arrives, burst = 2 units. Preempts P_long (2 < 99 remaining).t=3: P_short2 arrives, burst = 2 units. Preempts P_long (2 < 99 remaining).t=5: P_short3 arrives, burst = 2 units. Preempts P_long again.... (this continues indefinitely)As long as short jobs keep arriving at a rate faster than they can be serviced, P_long never gets CPU time. Its remaining burst never decreases, so it is always deprioritized.

Starvation vs. Aging as a Fix:

The standard remedy is aging — gradually increasing the effective priority (or decreasing the effective remaining time) of a process that has been waiting. After waiting units, its priority is boosted so that eventually it becomes the shortest “effective” remaining time in the queue.

| Property | SRTF | SRTF with Aging |

|---|---|---|

| Optimal avg. turnaround | Yes | Approximately |

| Starvation-free | No | Yes |

| Implementation complexity | Low | Medium |

Problem 16: Preemptive Priority and Starvation

Section titled “Problem 16: Preemptive Priority and Starvation”True/False: Preemptive Priority scheduling may cause starvation. Justify your answer.

Instructor Solution

True. In Preemptive Priority scheduling, the kernel always grants the CPU to the process with the highest priority. If the system is constantly flooded with high-priority tasks, a low-priority process will be repeatedly preempted or bypassed. It may sit in the Ready queue indefinitely, never receiving enough CPU time to complete.

Elaboration (by LLM)

How Priority Scheduling Works:

Each process is assigned a numeric priority (convention varies — sometimes lower number = higher priority, sometimes higher number = higher priority). The CPU always runs the highest-priority ready process. If a new higher-priority process arrives while a lower-priority one is running, the CPU is immediately preempted.

The Starvation Mechanism:

Consider a system where:

- High-priority processes (priority 1) arrive continuously

- A low-priority process (priority 10) is in the ready queue

The scheduler will always find a priority-1 process to run. The priority-10 process waits — possibly forever.

Real-World Example:

This is precisely what happened on early IBM 7094 systems in the 1960s. A low-priority batch job submitted in 1967 was found still waiting in the queue when the machine was finally shut down in 1973 — it had never run.

SRTF vs. Priority Starvation — Similar Root Cause:

SRTF Starvation

Starves processes with long remaining burst times because short-burst arrivals always win.

Priority Starvation

Starves processes with low priority values because high-priority arrivals always win.

Both share the same structural flaw: a single dimension (remaining time or priority) is used to make decisions, and a process that scores poorly on that dimension may never improve its standing.

The Fix — Aging:

Priority aging gradually increases the priority of a waiting process over time:

This ensures that even the lowest-priority process will eventually become the highest-priority process if it waits long enough, guaranteeing eventual CPU access.

Problem 17: Round Robin vs. FCFS Response Time

Section titled “Problem 17: Round Robin vs. FCFS Response Time”True/False: Round Robin scheduling is better than FCFS in terms of response time. Justify your answer.

Instructor Solution

True. Round Robin is designed specifically to improve response time by limiting how long any single process can hold the CPU. In FCFS, a short process can be stuck behind a very long one (the “convoy effect”), leading to long delays. Round Robin ensures that every process gets a turn within a fixed time window, providing much faster initial feedback to users.

Elaboration (by LLM)

Response Time vs. Turnaround Time — An Important Distinction:

- Response time: Time from process arrival until it first gets CPU time (first response)

- Turnaround time: Time from arrival until the process fully completes

Round Robin optimizes response time. It does not minimize turnaround time (SJF does that). Understanding which metric matters for your use case is key.

The Convoy Effect in FCFS:

FCFS runs each process to completion before moving on. If a long process arrives first:

FCFS with processes: P_long(burst=50), P_short1(burst=1), P_short2(burst=1)

Timeline: [P_long: 0..50] [P_short1: 50..51] [P_short2: 51..52]

Response times: P_long=0, P_short1=50, P_short2=51Average response time = (0 + 50 + 51) / 3 = 33.7P_short1 and P_short2 are “stuck behind the convoy” of the long process.

Round Robin Breaks the Convoy:

RR (quantum=5) with same processes:

Timeline: [P_long: 0..5] [P_short1: 5..6] [P_short2: 6..7] [P_long: 7..52]

Response times: P_long=0, P_short1=5, P_short2=6Average response time = (0 + 5 + 6) / 3 = 3.7The short processes get their first CPU time within one quantum of the long process starting.

The Tradeoff — Turnaround Time:

Round Robin worsens average turnaround time compared to SJF, because it interleaves execution rather than finishing short jobs first:

| Metric | FCFS | Round Robin | SJF |

|---|---|---|---|

| Response time | Poor (convoy effect) | Good | Good |

| Turnaround time | Moderate | Poor (interleaving) | Optimal |

| Starvation-free | Yes | Yes | No |

| Implementation | Trivial | Simple | Moderate |

Quantum Size Matters:

- If quantum → ∞, Round Robin degenerates to FCFS

- If quantum → 0, Round Robin becomes processor sharing (ideal, but context switch overhead dominates)

- Optimal quantum: large enough to amortize context switch cost, small enough to ensure responsiveness

Problem 18: FCFS Gantt Chart with 5 Processes

Section titled “Problem 18: FCFS Gantt Chart with 5 Processes”Consider the following 5 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 4 | 5 |

| P2 | 6 | 4 |

| P3 | 0 | 3 |

| P4 | 6 | 2 |

| P5 | 5 | 4 |

Draw a Gantt chart illustrating the execution of these processes using FCFS. Compute the average waiting time and average turnaround time. What’s the CPU utilization?

Instructor Solution

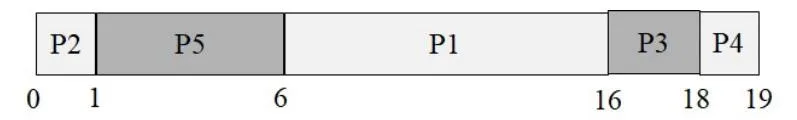

Gantt Chart:

| P3 | Idle | P1 | P5 | P2 | P4 | |

|---|---|---|---|---|---|---|

| 0 | 3 | 4 | 9 | 13 | 17 | 19 |

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 4 | 5 | 9 | 5 | 0 |

| P2 | 6 | 4 | 17 | 11 | 7 |

| P3 | 0 | 3 | 3 | 3 | 0 |

| P4 | 6 | 2 | 19 | 13 | 11 |

| P5 | 5 | 4 | 13 | 8 | 4 |

Average Waiting Time = (0 + 7 + 0 + 11 + 4) / 5 = 4.4

Average Turnaround Time = (5 + 11 + 3 + 13 + 8) / 5 = 8

CPU Utilization = 94.73%

Elaboration (by LLM)

Step-by-Step Construction of the Gantt Chart:

FCFS schedules processes in arrival order. Let’s trace through each decision point:

- t=0: P3 is the only arrived process → P3 runs from t=0 to t=3.

- t=3: P3 finishes. Check who has arrived by t=3: only P3 (P1 arrives at t=4). → CPU goes idle.

- t=4: P1 arrives. No other process is waiting → P1 runs from t=4 to t=9.

- t=9: P1 finishes. Processes that arrived by t=9: P2 (at t=6), P4 (at t=6), P5 (at t=5). In arrival order: P5 first (t=5), then P2 and P4 (both t=6). → P5 runs from t=9 to t=13.

- t=13: P5 finishes. P2 and P4 both arrived at t=6. Tie broken by FCFS → P2 first. → P2 runs from t=13 to t=17.

- t=17: P2 finishes → P4 runs from t=17 to t=19.

Computing Each Metric:

Recall the formulas:

Applying to each process:

- P1: Turnaround = 9 − 4 = 5. Waiting = 5 − 5 = 0. (P1 arrived at t=4 and ran immediately at t=4.)

- P2: Turnaround = 17 − 6 = 11. Waiting = 11 − 4 = 7.

- P3: Turnaround = 3 − 0 = 3. Waiting = 3 − 3 = 0. (P3 ran immediately.)

- P4: Turnaround = 19 − 6 = 13. Waiting = 13 − 2 = 11.

- P5: Turnaround = 13 − 5 = 8. Waiting = 8 − 4 = 4.

Understanding CPU Utilization:

Total elapsed time = 19 units (from t=0 to t=19). But the CPU was idle from t=3 to t=4 (1 unit). So useful CPU time = 19 − 1 = 18 units.

The 1-unit idle gap occurred because no process was ready when P3 finished at t=3 and P1 didn’t arrive until t=4. In a busy system with more overlapping arrivals, idle gaps like this wouldn’t occur and utilization would approach 100%.

Problem 19: FCFS with Overhead

Section titled “Problem 19: FCFS with Overhead”Consider the following 6 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 0 | 3 |

| P2 | 1 | 2 |

| P3 | 2 | 1 |

| P4 | 3 | 4 |

| P5 | 4 | 5 |

| P6 | 5 | 2 |

(a) Draw a Gantt chart illustrating the execution of these processes using FCFS. Compute the average waiting time and average turnaround time for each case.

Instructor Solution

Gantt Chart:

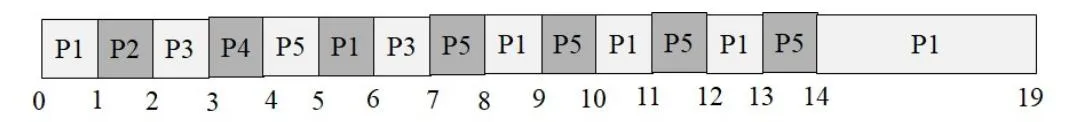

| P1 | P2 | P3 | P4 | P5 | P6 | |

|---|---|---|---|---|---|---|

| 0 | 3 | 5 | 6 | 10 | 15 | 17 |

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 3 | 3 | 3 | 0 |

| P2 | 1 | 2 | 5 | 4 | 2 |

| P3 | 2 | 1 | 6 | 4 | 3 |

| P4 | 3 | 4 | 10 | 7 | 3 |

| P5 | 4 | 5 | 15 | 11 | 6 |

| P6 | 5 | 2 | 17 | 12 | 10 |

Average Waiting Time = (0 + 2 + 3 + 3 + 6 + 10) / 6 = 4

Average Turnaround Time = (3 + 4 + 4 + 7 + 11 + 12) / 6 = 6.83

Elaboration (by LLM)

Why There Are No Idle Gaps Here:

Unlike Problem 18, every process in this set arrives before the previous one finishes. P1 starts at t=0 and runs to t=3. P2 arrived at t=1, so it’s already waiting when P1 finishes. This pattern continues throughout — the CPU is never idle, which is why CPU utilization is 100%.

Tracing the Schedule:

FCFS is entirely determined by arrival order, so the execution sequence is simply P1 → P2 → P3 → P4 → P5 → P6.

| Step | Process | Starts | Ends | Reason |

|---|---|---|---|---|

| 1 | P1 | 0 | 3 | First to arrive |

| 2 | P2 | 3 | 5 | Arrived at t=1, waited |

| 3 | P3 | 5 | 6 | Arrived at t=2, waited |

| 4 | P4 | 6 | 10 | Arrived at t=3, waited |

| 5 | P5 | 10 | 15 | Arrived at t=4, waited |

| 6 | P6 | 15 | 17 | Arrived at t=5, waited |

Why P6 Has Such a Long Wait:

P6 arrives at t=5 but doesn’t run until t=15 — a wait of 10 units on a 2-unit burst. This illustrates the convoy effect vividly: P6 is a short job but must wait behind P4 (4 units) and P5 (5 units) that arrived before it. Under SJF, P6 would have been scheduled before P4 and P5, drastically reducing its wait time.

(b) Now assume that there is 1 unit of overhead when scheduling a process. Compute the efficiency of the scheduling algorithm; that is, the percentage of time where the CPU is doing useful work as opposed to doing context switching.

Instructor Solution

With 1 unit of scheduling overhead between each process transition, there will be 5 units of scheduling overhead total (between P1→P2, P2→P3, P3→P4, P4→P5, P5→P6). Total time = 17 + 5 = 22.

CPU Efficiency = (17 / 22) × 100 = 77.27%

The CPU is doing useful work 77.27% of the time.

Elaboration (by LLM)

What “Scheduling Overhead” Represents:

In a real system, switching from one process to another isn’t free. The OS must:

- Save the outgoing process’s registers, stack pointer, and program counter (into its PCB)

- Update the process’s state in the scheduler data structures

- Select the next process (run the scheduling algorithm)

- Load the incoming process’s saved state

- Flush/reload relevant CPU caches and TLB entries

This overhead is modeled here as a fixed 1 time unit per transition.

Counting the Transitions:

With 6 processes run sequentially, there are 5 transitions:

P1 → [overhead] → P2 → [overhead] → P3 → [overhead] → P4 → [overhead] → P5 → [overhead] → P6In general, for processes with overhead per transition:

Here: units of overhead.

Computing Efficiency:

Why This Matters for Quantum Selection:

This overhead model directly motivates why scheduling quanta can’t be made arbitrarily small. Consider if we had run these same 6 processes with Round Robin at a quantum of 1 unit — there would be many more context switches, dramatically reducing efficiency.

The general efficiency formula for Round Robin with quantum and context switch cost is approximately:

| Quantum | Overhead | Efficiency |

|---|---|---|

| 1 | 1 | 50% |

| 5 | 1 | 83% |

| 10 | 1 | 91% |

| 100 | 1 | 99% |

This shows why larger quanta improve efficiency — but at the cost of worse response time.

Problem 20: Preemptive SRTF with 4 Processes

Section titled “Problem 20: Preemptive SRTF with 4 Processes”Consider the following 4 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 0 | 12 |

| P2 | 2 | 4 |

| P3 | 3 | 6 |

| P4 | 8 | 1 |

Draw a Gantt chart illustrating the execution of these processes using preemptive shortest remaining time first algorithm. Compute the average waiting time and average turnaround time.

Instructor Solution

Gantt Chart:

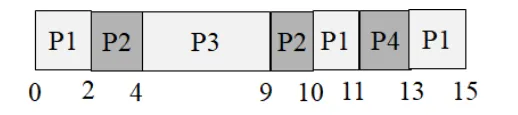

| P1 | P2 | P3 | P1 | P3 | |

|---|---|---|---|---|---|

| 0 | 2 | 6 | 8 | 17 | 23 |

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 12 | 17 | 17 | 5 |

| P2 | 2 | 4 | 6 | 4 | 0 |

| P3 | 3 | 6 | 23 | 20 | 14 |

| P4 | 8 | 1 | 9 | 1 | 0 |

Average Waiting Time = (5 + 0 + 14 + 0) / 4 = 4.75

Average Turnaround Time = (17 + 4 + 20 + 1) / 4 = 10.5

Elaboration (by LLM)

How to Trace SRTF Step by Step:

SRTF requires re-evaluating which process has the shortest remaining burst at every arrival event. Let’s trace each key moment:

t=0: Only P1 is present. P1 starts running. P1 remaining = 12.

t=2: P2 arrives with burst = 4. Compare: P1 remaining = 10, P2 remaining = 4. P2 wins (4 < 10). P1 is preempted. P2 starts.

t=3: P3 arrives with burst = 6. Compare: P2 remaining = 3, P3 remaining = 6. P2 wins (3 < 6). P2 continues.

t=6: P2 finishes. Ready queue: P1 (remaining = 10), P3 (remaining = 6). P3 wins (6 < 10). P3 starts.

t=8: P4 arrives with burst = 1. Compare: P3 remaining = 4, P4 remaining = 1. P4 wins (1 < 4). P3 is preempted. P4 starts.

t=9: P4 finishes. Ready queue: P1 (remaining = 10), P3 (remaining = 4). P3 wins (4 < 10). P3 continues.

t=13: P3 finishes (ran from t=9 to t=13, using its remaining 4 units). Only P1 remains (remaining = 10). P1 runs from t=13 to… wait.

Visualizing Remaining Burst Times Over Time:

Time: 0 2 3 6 8 9 13 23 │ │ │ │ │ │ │ │P1: 12 10 10 10 10 10 0 - (ran 0-2, then 13-23)P2: - 4 3 0 - - - - (ran 2-6)P3: - - 6 6 4 4 0 - (ran 6-8, 9-13)P4: - - - - 1 0 - - (ran 8-9)Understanding the Waiting Times:

- P1 (waiting = 5): P1 ran for 2 units (t=0→2) before being preempted, then waited until t=13 to resume. Total wait = (13 − 2) − (time spent running before t=13) = elapsed time not running = (13 − 2) = 11… simplified: waiting = turnaround − burst = 17 − 12 = 5. ✅

- P2 (waiting = 0): Arrived at t=2, immediately preempted P1. No waiting at all.

- P3 (waiting = 14): Turnaround = 23 − 3 = 20. Waiting = 20 − 6 = 14. P3 spent a long time waiting because P4 (a 1-unit job) preempted it, and P1 ran for 10 units after t=13.

- P4 (waiting = 0): Arrived at t=8, immediately preempted P3. No waiting.

Key Insight — SRTF Rewards Short New Arrivals:

P2 and P4 both arrive and immediately run (waiting = 0) because their burst times are the shortest at their arrival moments. This is SRTF’s defining behavior: new short jobs jump to the front of the line, at the cost of longer jobs (like P3) experiencing extended waits.

Problem 21: Preemptive SRTF with Different Arrivals

Section titled “Problem 21: Preemptive SRTF with Different Arrivals”Consider the following 4 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 0 | 10 |

| P2 | 3 | 6 |

| P3 | 7 | 1 |

| P4 | 8 | 3 |

Draw a Gantt chart illustrating the execution of these processes using preemptive shortest remaining time first algorithm. Compute the average waiting time and average turnaround time.

Instructor Solution

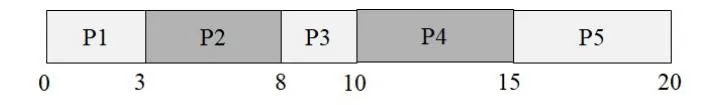

Gantt Chart:

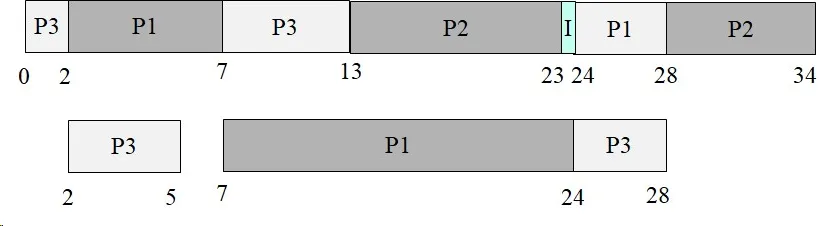

| P1 | P2 | P3 | P2 | P4 | P1 | |

|---|---|---|---|---|---|---|

| 0 | 3 | 3 | 7 8 | 3 1 | 0 14 | 1 20 |

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 10 | 20 | 20 | 10 |

| P2 | 3 | 6 | 10 | 7 | 1 |

| P3 | 7 | 1 | 8 | 1 | 0 |

| P4 | 8 | 3 | 14 | 6 | 3 |

Average Waiting Time = (10+1+0+3) / 4 = 3.5

Average Turnaround Time = (20+7+1+6) / 4 = 8.5

Elaboration (by LLM)

What is SRTF?

Shortest Remaining Time First (SRTF) is the preemptive version of Shortest Job First (SJF). At every moment a new process arrives, the scheduler compares the remaining burst time of the currently running process against the burst time of the newcomer. If the newcomer is shorter, it immediately preempts the current process.

Step-by-Step Trace:

- t=0: Only P1 is available (burst=10). P1 runs.

- t=3: P2 arrives (burst=6). P1 has 7 units remaining. Since 6 < 7, P2 preempts P1. P1 is paused with 7 units left.

- t=7: P3 arrives (burst=1). P2 has 2 units remaining. Since 1 < 2, P3 preempts P2.

- t=8: P3 finishes. P4 arrives simultaneously (burst=3). P2 has 2 units remaining. Since 2 < 3, P2 resumes over P4.

- t=10: P2 finishes. Only P4 (burst=3) and P1 (7 remaining) are ready. P4 runs.

- t=14: P4 finishes. P1 resumes with 7 units remaining, running until t=20.

How Waiting Time is Calculated:

For example, P1: units of waiting — it was preempted early and had to sit idle while P2, P3, and P4 all ran.

Key Insight — Why P1 Suffers:

P1 starts first but has the longest burst time (10). Every subsequent arrival that is shorter preempts it, causing P1 to accumulate the most waiting time. This is a hallmark of SRTF: long jobs get repeatedly starved by shorter arrivals.

Turnaround vs. Waiting Time Summary:

| Process | Turnaround | Burst | Waiting |

|---|---|---|---|

| P1 | 20 | 10 | 10 |

| P2 | 7 | 6 | 1 |

| P3 | 1 | 1 | 0 |

| P4 | 6 | 3 | 3 |

P3 is ideal: it arrives, finds a shorter-than-current remaining time, runs immediately, and finishes with zero wait.

Problem 22: Priority Scheduling Non-preemptive vs. Preemptive

Section titled “Problem 22: Priority Scheduling Non-preemptive vs. Preemptive”Consider the following 5 processes in a system. Assume that lower values indicate higher priority.

| Process | Arrival Time | Burst Time | Priority |

|---|---|---|---|

| P1 | 0 | 11 | 2 |

| P2 | 5 | 28 | 0 |

| P3 | 12 | 2 | 3 |

| P4 | 2 | 10 | 1 |

| P5 | 9 | 16 | 4 |

(a) Draw the Gantt chart if we employ non-preemptive priority scheduling. Compute the turnaround time for each process.

Instructor Solution

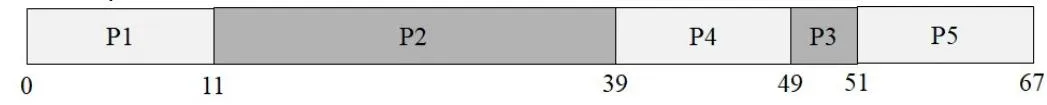

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround |

|---|---|---|---|---|

| P1 | 0 | 11 | 11 | 11 |

| P2 | 5 | 28 | 39 | 34 |

| P3 | 12 | 2 | 49 | 37 |

| P4 | 2 | 10 | 51 | 49 |

| P5 | 9 | 16 | 67 | 58 |

Elaboration (by LLM)

Key Concept — Non-Preemptive Priority:

In non-preemptive priority scheduling, once the CPU is handed to a process, it runs to completion (no interruption). When the CPU becomes free, the scheduler picks whichever ready process has the highest priority (lowest number here).

Step-by-step walkthrough:

- t=0: Only P1 is available (arrived at 0). P1 starts. Priority 2 — no competition yet.

- t=11: P1 finishes. By now, P2 (arrived t=5, priority 0) and P4 (arrived t=2, priority 1) are both in the ready queue. P2 wins with priority 0 (the best).

- t=39: P2 finishes. Queue now has P4 (priority 1), P3 (arrived t=12, priority 3), P5 (arrived t=9, priority 4). P4 wins with priority 1.

- t=49: P4 finishes. Queue has P3 (priority 3) and P5 (priority 4). P3 wins.

- t=51: P3 finishes. Only P5 remains — runs to completion at t=67.

Why turnaround times are large:

P4 (priority 1) is “starved” behind P2’s massive 28-unit burst, even though P4 had higher priority than P2. In non-preemptive scheduling, a long-running lower-priority process that started before higher-priority ones arrive cannot be displaced — the advantage only kicks in at the next scheduling decision.

Turnaround Time Formula:

For example, P4: , meaning P4 waited a long time despite having a fairly high priority (1), because P2 grabbed the CPU first at t=11.

(b) Draw the Gantt chart if we employ preemptive priority scheduling. Compute the turnaround time for each process.

Instructor Solution

| P1 | P4 | P2 | P4 | P1 | P3 | P1 | P5 |0 2 5 14 15 26 27 28 33 49 ...(Full schedule: P1[0–2], P4[2–5], P2[5–14], P4[14–15], P1[15–26], P3[26–28], P1[28–33], P2[33–?]… see metrics below)

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround |

|---|---|---|---|---|

| P1 | 0 | 11 | 49 | 49 |

| P2 | 5 | 28 | 33 | 27 |

| P3 | 12 | 2 | 51 | 39 |

| P4 | 2 | 10 | 40 | 38 |

| P5 | 9 | 16 | 67 | 58 |

Elaboration (by LLM)

Key Concept — Preemptive Priority (a.k.a. Priority with Preemption):

Every time a new process arrives, its priority is compared against the currently running process. If the new arrival has a higher priority (lower number), it immediately preempts the CPU — the running process is paused and pushed back onto the ready queue.

Step-by-step walkthrough:

- t=0: P1 starts (only process, priority 2).

- t=2: P4 arrives (priority 1 < 2). P4 preempts P1. P1 has used 2 of its 11 units, with 9 remaining.

- t=5: P2 arrives (priority 0 < 1). P2 preempts P4. P4 has used 3 of its 10 units, with 7 remaining.

- t=9: P5 arrives (priority 4). P2 (priority 0) stays — P5 cannot preempt.

- t=12: P3 arrives (priority 3). P2 (priority 0) stays — P3 cannot preempt.

- t=33: P2 finishes. Ready queue: P4 (pri 1, 7 remaining), P1 (pri 2, 9 remaining), P3 (pri 3, 2 remaining), P5 (pri 4, 16 remaining). P4 runs next.

- P4 finishes at t=40, then P1 resumes, then P3, then P5.

Comparing to Non-Preemptive:

| Process | Turnaround (Non-Preemptive) | Turnaround (Preemptive) |

|---|---|---|

| P1 | 11 | 49 ↑ worse |

| P2 | 34 | 27 ↓ better |

| P3 | 37 | 39 ↑ slightly worse |

| P4 | 49 | 38 ↓ better |

| P5 | 58 | 58 same |

Insight: P2 improves dramatically (34→27) since it immediately seizes the CPU when it arrives. P1 suffers (11→49) since it gets repeatedly preempted. This illustrates how preemptive priority scheduling strongly favors high-priority processes at the expense of low-priority ones that started early.

Problem 23: Priority Scheduling with Higher Priority Values

Section titled “Problem 23: Priority Scheduling with Higher Priority Values”Consider the following 5 processes in a system. Assume that higher values indicate higher priority.

| Process | Arrival Time | Burst Time | Priority |

|---|---|---|---|

| P1 | 0 | 4 | 2 |

| P2 | 1 | 3 | 3 |

| P3 | 2 | 1 | 4 |

| P4 | 3 | 5 | 5 |

| P5 | 4 | 2 | 5 |

(a) Draw the Gantt chart if we employ non-preemptive priority scheduling. Compute the turnaround time for each process.

Instructor Solution

| P1 | P4 | P5 | P3 | P2 |0 4 9 11 12 15Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround |

|---|---|---|---|---|

| P1 | 0 | 4 | 4 | 4 |

| P2 | 1 | 3 | 15 | 14 |

| P3 | 2 | 1 | 12 | 10 |

| P4 | 3 | 5 | 9 | 6 |

| P5 | 4 | 2 | 11 | 7 |

Elaboration (by LLM)

Key Concept — Higher Value = Higher Priority:

This is the reverse of Problem 22. Here a priority of 5 is the best and 2 is the worst. The scheduling logic is otherwise identical.

Step-by-step walkthrough:

- t=0: Only P1 is available. P1 runs (non-preemptive, so it runs to completion regardless).

- t=4: P1 finishes. Queue now contains P2 (pri 3), P3 (pri 4), P4 (pri 5). P4 wins (highest priority = 5).

- t=9: P4 finishes. Queue has P2 (pri 3), P3 (pri 4), P5 (pri 5). P5 is tied with priority 5 — but P5 arrived at t=4, while P4 just finished. P5 runs.

- t=11: P5 finishes. Queue: P2 (pri 3), P3 (pri 4). P3 wins.

- t=12: P3 finishes. P2 runs last, finishing at t=15.

The “tie-breaking” observation:

P4 and P5 have equal priority (5). When both are in the queue simultaneously (after t=4), a tie-breaking rule (usually FCFS among equals — P4 arrived before P5) puts P4 first. P5 then runs after P4.

Why P2 (priority 3) has the worst turnaround despite a short burst:

P2 arrives early (t=1) but has the second-lowest priority. It must wait for P4, P5, and P3 to all finish before getting CPU time — a classic priority inversion of low-priority starvation.

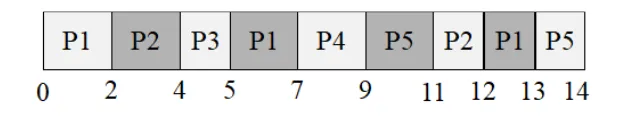

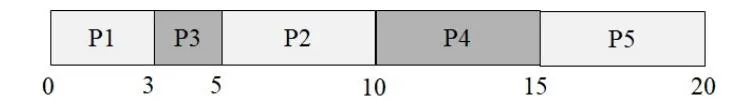

(b) Draw the Gantt chart if we employ preemptive priority scheduling. Compute the turnaround time for each process.

Instructor Solution

| P1 | P2 | P3 | P4 | P5 | P2 | P1 |0 1 2 3 8 10 11 15Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround |

|---|---|---|---|---|

| P1 | 0 | 4 | 15 | 15 |

| P2 | 1 | 3 | 12 | 11 |

| P3 | 2 | 1 | 3 | 1 |

| P4 | 3 | 5 | 8 | 5 |

| P5 | 4 | 2 | 10 | 6 |

Elaboration (by LLM)

Key Concept — Preemptive with Highest = Best:

Each new arrival triggers a comparison. If the newcomer’s priority exceeds the running process’s priority, the newcomer immediately takes the CPU.

Step-by-step walkthrough:

- t=0: P1 starts (priority 2).

- t=1: P2 arrives (priority 3 > 2). P2 preempts P1. P1 has 3 units left.

- t=2: P3 arrives (priority 4 > 3). P3 preempts P2. P2 has 2 units left.

- t=3: P4 arrives (priority 5 > 4). P4 preempts P3. P3 has 0 units left… wait: P3 started at t=2 with 1 unit, so at t=3 P3 is done. P4 starts.

- t=4: P5 arrives (priority 5 = P4’s priority). Tie → P4 keeps the CPU (running process is not preempted on a tie).

- t=8: P4 finishes. P5 (pri 5, 2 units) runs next.

- t=10: P5 finishes. Queue: P2 (pri 3, 2 remaining), P1 (pri 2, 3 remaining). P2 runs.

- t=12: P2 finishes. P1 runs to completion at t=15.

P3’s extraordinary turnaround of 1:

P3 arrives at t=2, immediately preempts P2, runs its single unit, and finishes at t=3. Turnaround = 3−2 = 1. This is the best possible outcome for a 1-unit burst with the highest priority at arrival.

Cascading preemptions visualized:

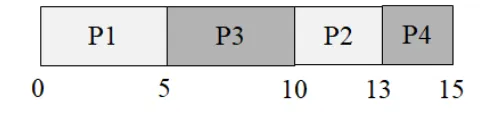

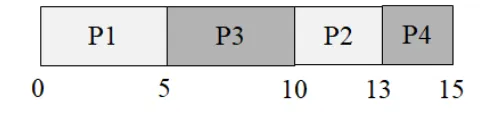

t=0 P1 startst=1 P2 arrives → preempts P1t=2 P3 arrives → preempts P2t=3 P4 arrives → preempts P3 (but P3 just finished anyway)t=4 P5 arrives → tie with P4, no preemptiont=8 P4 done → P5 runst=10 P5 done → P2 resumest=12 P2 done → P1 resumest=15 P1 doneProblem 24: Round Robin with Quantum 2

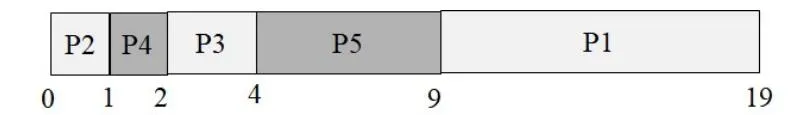

Section titled “Problem 24: Round Robin with Quantum 2”Consider the following 5 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 0 | 5 |

| P2 | 1 | 3 |

| P3 | 2 | 1 |

| P4 | 3 | 2 |

| P5 | 4 | 3 |

Draw a Gantt chart illustrating the execution of these processes using round robin scheduling with a quantum of 2. Compute the average waiting time and average turnaround time. Note: At any given time, a newly arriving process is put to the back of the ready queue before the executing process is preempted. That is, the preempted process is always the last process in the ready queue.

Instructor Solution

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 5 | 13 | 13 | 8 |

| P2 | 1 | 3 | 12 | 11 | 8 |

| P3 | 2 | 1 | 5 | 3 | 2 |

| P4 | 3 | 2 | 9 | 6 | 4 |

| P5 | 4 | 3 | 14 | 10 | 7 |

Average Waiting Time = (8+8+2+4+7) / 5 = 5.8

Average Turnaround Time = (13+11+3+6+10) / 5 = 8.6

Elaboration (by LLM)

Key Concept — Round Robin (RR):

RR is a preemptive scheduling algorithm that gives each process a fixed time slice (quantum). After the quantum expires, the running process is placed at the back of the ready queue and the next process runs. This ensures fairness — no process monopolizes the CPU.

The critical tie-breaking rule (as stated in the problem):

A newly arriving process is added to the ready queue before the preempted process is re-enqueued.

This means: when a quantum expires at time , any process that arrived at exactly time joins the queue first, and only then is the preempted process appended at the back.

Ready Queue Trace:

| Time | Running | Event | Queue after event (front→back) |

|---|---|---|---|

| 0 | P1 | P1 starts | [] |

| 1 | P1 | P2 arrives | [P2] |

| 2 | P2 | P1 quantum expires; P3 arrives at t=2 → P3 joins first, then P1 re-enqueued | [P3, P1] |

| 3 | P2 | P4 arrives | [P3, P1, P4] |

| 4 | P3 | P2 quantum expires; P5 arrives at t=4 → P5 joins first, then P2 re-enqueued | [P1, P4, P5, P2] |

| 5 | P1 | P3 finishes (only 1 unit needed) | [P4, P5, P2] |

| 7 | P4 | P1 quantum expires; P1 re-enqueued | [P5, P2, P1] |

| 9 | P5 | P4 finishes (exactly 2 units used) | [P2, P1] |

| 11 | P2 | P5 quantum expires; P5 re-enqueued | [P1, P5] |

| 12 | P1 | P2 finishes (1 unit remaining used) | [P5] |

| 13 | P5 | P1 finishes (1 unit remaining used) | [] |

| 14 | — | P5 finishes (1 unit remaining used) | [] |

Tracing each process’s CPU slots:

P1 (burst = 5):

- t=0→2: runs 2 units (3 left)

- t=5→7: runs 2 units (1 left)

- t=12→13: runs 1 unit → done ✅

P2 (burst = 3):

- t=2→4: runs 2 units (1 left)

- t=11→12: runs 1 unit → done ✅

P3 (burst = 1):

- t=4→5: runs 1 unit → done ✅

P4 (burst = 2):

- t=7→9: runs 2 units → done ✅

P5 (burst = 3):

- t=9→11: runs 2 units (1 left)

- t=13→14: runs 1 unit → done ✅

Waiting Time Calculation:

| Process | Turnaround | Burst | Waiting |

|---|---|---|---|

| P1 | 13 | 5 | 8 |

| P2 | 11 | 3 | 8 |

| P3 | 3 | 1 | 2 |

| P4 | 6 | 2 | 4 |

| P5 | 10 | 3 | 7 |

Effect of the tie-breaking rule:

The rule — new arrivals join before the preempted process — is what causes P3 to run at t=4 instead of P1. At t=2, P3 arrives at the exact moment P1’s quantum expires. P3 is enqueued first, pushing P1 further back. Without this rule (if P1 re-enqueued first), P1 would run again at t=4 and P3 would wait longer. This rule generally benefits newly arriving processes at the cost of the process that just finished its quantum.

Quantum size trade-off:

With quantum=2 here, short jobs like P3 (burst=1) and P4 (burst=2) finish within one or two scheduling rounds, while longer jobs like P1 (burst=5) cycle through the queue multiple times.

Problem 25: FCFS vs. Round Robin

Section titled “Problem 25: FCFS vs. Round Robin”Consider the following 3 processes in a system.

| Process | Arrival Time | Burst Time |

|---|---|---|

| P1 | 0 | 5 |

| P2 | 1 | 7 |

| P3 | 3 | 4 |

Draw a Gantt chart illustrating the execution of these processes using FCFS and round robin scheduling with a quantum of 2. Compute the average waiting time and average turnaround time.

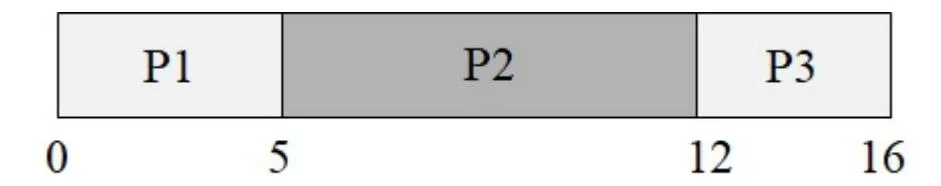

(a) FCFS

Instructor Solution

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 5 | 5 | 5 | 0 |

| P2 | 1 | 7 | 12 | 11 | 4 |

| P3 | 3 | 4 | 16 | 13 | 9 |

Average Waiting Time = (0+4+9) / 3 = 4.33

Average Turnaround Time = (5+11+13) / 3 = 9.66

Elaboration (by LLM)

Key Concept — First Come First Served (FCFS):

FCFS is the simplest scheduling algorithm: processes are run in the order they arrive, each to completion, with no preemption. It is also known as FIFO scheduling.

Step-by-step walkthrough:

- t=0: P1 arrives and immediately starts (it’s the only process). Runs for 5 units.

- t=5: P1 finishes. Both P2 (arrived t=1) and P3 (arrived t=3) are waiting. P2 arrived first → P2 runs for 7 units.

- t=12: P2 finishes. P3 runs for 4 units.

- t=16: P3 finishes. All done.

Waiting Time breakdown:

- P1: arrived t=0, started t=0 → waited 0 units.

- P2: arrived t=1, started t=5 → waited 4 units.

- P3: arrived t=3, started t=12 → waited 9 units.

The “Convoy Effect”:

P3 has a burst of only 4 units, but it gets stuck behind P2’s 7-unit burst. If P3 had arrived first, the average waiting time would be much lower. This phenomenon — short processes getting blocked behind long ones — is called the convoy effect, and is a major weakness of FCFS.

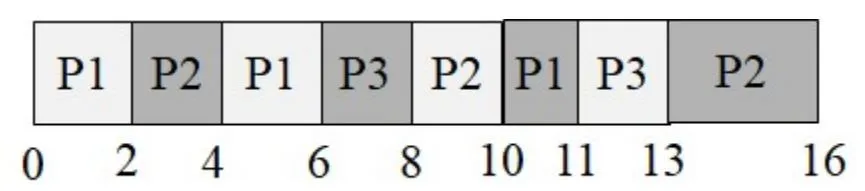

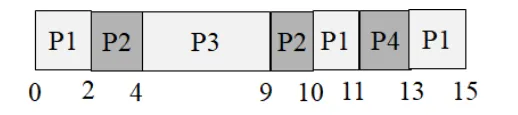

(b) Round Robin with quantum of 2

Instructor Solution

Process Metrics:

| Process | Arrival Time | Burst Time | Finish Time | Turnaround | Waiting Time |

|---|---|---|---|---|---|

| P1 | 0 | 5 | 11 | 11 | 6 |

| P2 | 1 | 7 | 16 | 15 | 8 |

| P3 | 3 | 4 | 13 | 10 | 6 |

Average Waiting Time = (6+8+6) / 3 = 6.66

Average Turnaround Time = (11+15+10) / 3 = 12

Elaboration (by LLM)

Ready Queue Trace (quantum = 2):

| Time | Running | Queue after event |

|---|---|---|

| 0 | P1 | [] (P1 runs) |

| 1 | P1 | [P2] (P2 arrives) |

| 2 | P2 | [P1] (P1 used 2 of 5, returns; P2 starts) |

| 3 | P2 | [P1, P3] (P3 arrives) |

| 4 | P1 | [P3] (P2 used 2 of 7, returns; P1 resumes) |

| 6 | P3 | [P2] (P1 used 2 more = 4 total of 5, returns; P3 starts) |

| 8 | P2 | [P1] (P3 used 2 of 4, returns; P2 resumes) |

| 10 | P1 | [P3] (P2 used 2 more = 4 total of 7, returns; P1 resumes) |

| 11 | P3 | [P2] (P1 finishes — only 1 unit left; P3 resumes) |

| 13 | P2 | [] (P3 finishes; P2 resumes) |

| 16 | — | [] (P2 finishes) |

FCFS vs. RR Comparison:

| Metric | FCFS | RR (q=2) | Winner |

|---|---|---|---|

| Avg Waiting Time | 4.33 | 6.66 | FCFS |

| Avg Turnaround | 9.66 | 12 | FCFS |

Surprising result: FCFS actually outperforms RR here! This happens because the processes have relatively similar burst times. RR’s overhead (extra context switches, fragmented execution) hurts more than it helps when bursts are not drastically different in size.

When does RR shine?

RR gives the biggest benefit when:

- There are many short interactive jobs mixed with long CPU-bound ones.

- Responsiveness (getting a first response quickly) matters more than raw throughput.

- Burst times vary widely — short jobs finish early without being held up by long ones.

Problem 26: Threading vs. Single Process

Section titled “Problem 26: Threading vs. Single Process”Consider the following function named “Work”, which performs I/O for 1 time unit and then performs computation for 1 time unit within a loop.

void Work(int numOfIterations){ for (int i=0; i < numOfIterations; i++){ DoIO(); // I/O takes 1 time unit Compute(); // Computation takes 1 time unit } //end-for} //end-Work

main1(int argc, char *argv[]){ Work(10); // Do the work in a single-threaded process} //end-mainmain2(int argc, char *argv[]){ CreateThread(Work(5)); // Create a thread to run Work with numOfIterations = 5 CreateThread(Work(5)); // Create a thread to run Work with numOfIterations = 5 wait_until_both_threads_are_done();} //end-main(a) Assume that the OS does NOT support threads. So the programmer designs its main function to be single-threaded and codes main1() shown above. Assuming that this is the only process in the system and the machine has 1 CPU, how long does the program take to finish? Answer the same question when the machine has 2 CPUs.

Instructor Solution

| Time | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| CPU | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 | ||||||||||

| I/O | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 | T1 |

It takes 20 units of time for the process (T1) to finish executing. If we had 2 CPUs, T1 would still have taken 20 units of time because the second CPU would have to stay idle as there is only one thread to execute in the system.

Elaboration (by LLM)

Why 20 units?

Each iteration of Work does:

DoIO()→ 1 unit on the I/O deviceCompute()→ 1 unit on the CPU

With 10 iterations: units total.

Because there is only one thread, the I/O and CPU phases alternate with no overlap:

Iteration: 1 2 3 ... 10I/O: [===] [===] [===] ... [===]CPU: [===] [===] ... [===]Time: 1 2 3 4 5 6 ... 19 20Why a second CPU doesn’t help:

Parallelism requires multiple independent execution units (threads/processes). With one thread, only one stream of instructions exists. A second CPU has nothing to run — it sits idle. Adding CPUs helps only when there are enough threads to keep them busy.

Key Insight:

where is the serial fraction and is the number of CPUs (Amdahl’s Law). With a single thread, → Speedup = 1 regardless of .

(b) Assume now that the OS supports kernel-level threads. So the programmer designs its main function to be multi-threaded and codes main2() shown above. Assuming that this is the only process in the system and the machine has 1 CPU, how long does the program take to finish? Answer the same question when the machine has 2 CPUs. Assume that the threads can NOT perform I/O in parallel.

Instructor Solution

| Time | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 |

|---|---|---|---|---|---|---|---|---|---|---|---|

| CPU | T1 | T2 | T1 | T2 | T1 | T2 | T1 | T2 | T1 | T2 | |

| I/O | T1 | T2 | T1 | T2 | T1 | T2 | T1 | T2 | T1 | T2 |

T1 finishes at time 10 and T2 finishes at time 11. So, the program finishes at time 11.

If we have two CPUs, we can theoretically schedule both T1 and T2 in parallel, but because both T1 and T2 are using the same I/O device and I/O can NOT be performed in parallel, we will get the same schedule and the program will still finish at time 11. Notice that one the CPUs will still be idle as at any point in time, only one of the threads is ready to execute while the other is doing I/O.

Elaboration (by LLM)

Why 11 units instead of 20?

With two threads, the I/O and CPU phases of T1 and T2 interleave. While T1 is waiting for I/O, T2 can use the CPU — and vice versa. This is the classic I/O-CPU overlap pattern.

T1: [I/O][CPU][I/O][CPU][I/O][CPU][I/O][CPU][I/O][CPU]T2: [I/O][CPU][I/O][CPU][I/O][CPU][I/O][CPU][I/O][CPU]Time: 1 2 3 4 5 6 7 8 9 10 11T1 starts I/O at t=1, then CPU at t=2, then hands I/O to T2 at t=2, and so on. They perfectly interleave because each has a 1-unit I/O followed by a 1-unit CPU.

The I/O bottleneck (1 CPU vs. 2 CPUs):

Even with 2 CPUs, the single I/O device is the bottleneck. At any point, one thread is doing I/O and the other is doing CPU work. A second CPU cannot speed this up because:

- The I/O device serializes all I/O requests.

- Only one thread is ever CPU-ready at a time (the other is always blocked on I/O).

Speedup Summary:

| Scenario | Time | Speedup vs. Single-threaded |

|---|---|---|

| main1, 1 CPU | 20 | 1× (baseline) |

| main2, 1 CPU | 11 | ~1.82× |

| main2, 2 CPUs | 11 | ~1.82× (I/O bound, no gain) |

General principle: Threading helps most when you have I/O-bound work that can overlap with CPU work. The speedup is limited by whichever resource is the bottleneck — here, the single I/O device caps the benefit at ~1.82×.

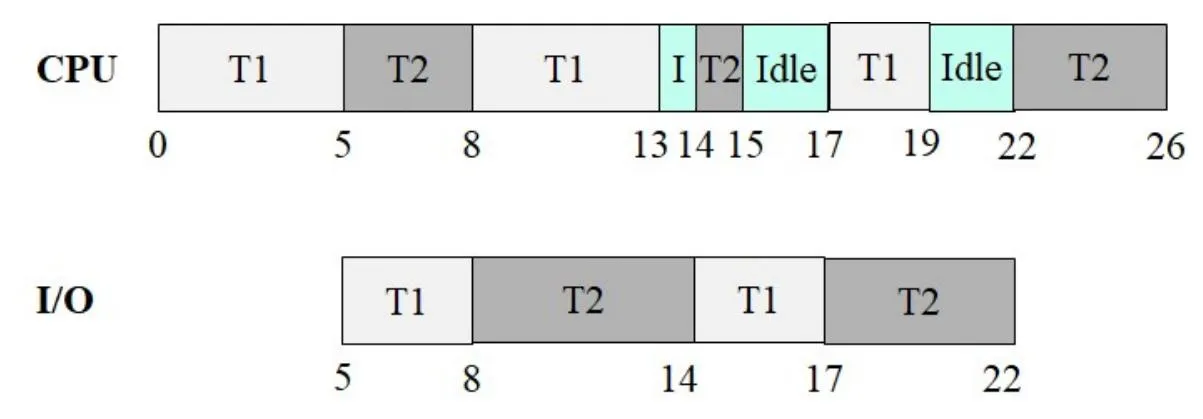

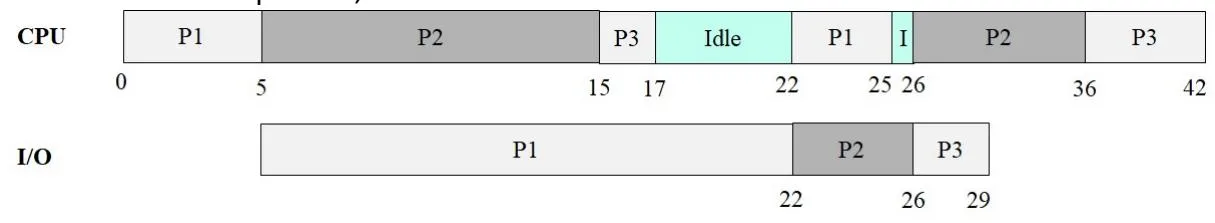

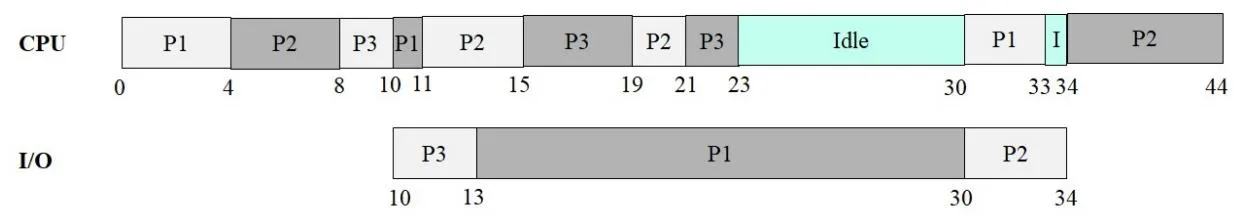

Problem 27: Kernel Threads FCFS

Section titled “Problem 27: Kernel Threads FCFS”Consider the following two threads T1 and T2 that belong to the same process P. Assume T1 is created first, followed by T2. Further assume that there is a single I/O device, which means that parallel I/O is NOT possible.

| T1 | T2 |

|---|---|

| Compute(5) | Compute(3) |

| I/O(3) | I/O(6) |

| Compute(5) | Compute(1) |

| I/O(3) | I/O(5) |

| Compute(2) | Compute(4) |

Assume that threads T1 and T2 are implemented as kernel threads. Draw the Gantt chart for First Come First Served (FCFS) scheduling. Compute the turnaround time for T1 and T2. Also compute the CPU utilization.

Instructor Solution

Process Metrics:

| Process | Arrival Time | Finish Time | Turnaround |

|---|---|---|---|

| T1 | 0 | 19 | 19 |

| T2 | 0 | 26 | 26 |

CPU Utilization:

Elaboration (by LLM)

Key Concept — FCFS with Kernel Threads and a Single I/O Device:

With kernel threads, the OS scheduler treats each thread as an independent schedulable entity. When a thread blocks for I/O, another thread can run on the CPU. However, since there is only one I/O device, I/O requests are serialized — only one thread can use the I/O device at a time.

Full execution trace:

| Time Interval | CPU | I/O Device | Notes |

|---|---|---|---|

| 0 – 5 | T1 | — | T1 computes for 5 units |

| 5 – 8 | T2 | T1 | T1 starts I/O; T2 starts computing (3 units) |

| 8 – 13 | T1 (1 unit of compute remains… wait) | T2 | T1 done with I/O at 8, T2 I/O starts at 8 |

Let’s redo this more carefully:

- t=0–5: T1 runs Compute(5). T2 is ready but FCFS puts T1 first.

- t=5–8: T1 does I/O(3). T2 runs Compute(3) on CPU (T2 can run while T1 is in I/O).

- t=8: T1 finishes I/O, T2 finishes Compute. T2 now needs I/O(6). T1 needs Compute(5). I/O device goes to T2 (T1’s I/O finished first, so T2 queued up). T1 resumes on CPU.

- t=8–13: T1 runs Compute(5). T2 does I/O(6) — but T2’s I/O takes until t=14.

- t=13–14: T1 finishes Compute(5), needs I/O(3). I/O device still busy with T2 until t=14. T1 waits (I/O queue).