06 - Process Synchronization

Problem 1: Critical Section and Mutual Exclusion

Section titled “Problem 1: Critical Section and Mutual Exclusion”What’s a Critical Section? What is mutual exclusion? Write a simple code to show how you would achieve mutual exclusion in a critical section using a mutex lock.

A critical section is a specific segment of code that accesses shared resources—such as global variables, shared data structures—that must not be concurrently accessed by more than one thread or process. If multiple threads execute this section at the same time, it can lead to unpredictable results or race conditions. Mutual exclusion is the programmatic property that ensures this safety; it guarantees that if one thread is executing in its critical section, no other threads are allowed to enter theirs. Essentially, it’s the “one at a time” rule that prevents data corruption in a multi-threaded environment.

Instructor Solution

pthread_mutex_t mutex;pthread_mutex_lock(&mutex);<Critical Section>pthread_mutex_unlock(&mutex);Elaboration (by LLM)

What Is a Critical Section?

A critical section is any region of code where a thread reads from or writes to a shared resource. The danger is simple: if two threads interleave their execution inside this region, the shared state can become inconsistent.

Consider a global counter count being incremented by two threads simultaneously:

// Thread A // Thread Bint temp = count; int temp = count; // Both read 5temp = temp + 1; temp = temp + 1; // Both compute 6count = temp; count = temp; // Both write 6 — one increment lost!The fix is to designate the increment as a critical section and enforce mutual exclusion around it.

What Is Mutual Exclusion?

Mutual exclusion (mutex) is the guarantee that at most one thread is executing inside a critical section at any given time. It is the foundational property that prevents race conditions.

How a Mutex Lock Enforces It

The three-line pattern in the solution is the canonical structure:

pthread_mutex_t mutex; // (1) Declare the mutex (shared between threads)

pthread_mutex_lock(&mutex); // (2) Acquire — blocks if another thread holds the lock/* Critical Section */ // (3) Safe to access shared resources herepthread_mutex_unlock(&mutex); // (4) Release — allows the next waiting thread to proceedWhat happens on lock:

- If the mutex is free → the calling thread acquires it and continues

- If the mutex is held → the calling thread is put to sleep by the OS until the lock is released

What happens on unlock:

- The mutex is marked free

- One of the sleeping threads (if any) is woken up and given the lock

Full Working Example

#include <pthread.h>#include <stdio.h>

int count = 0;pthread_mutex_t mutex = PTHREAD_MUTEX_INITIALIZER;

void *increment(void *arg) { for (int i = 0; i < 100000; i++) { pthread_mutex_lock(&mutex); // Enter critical section count++; // Safe: only one thread at a time pthread_mutex_unlock(&mutex); // Exit critical section } return NULL;}

int main() { pthread_t t1, t2; pthread_create(&t1, NULL, increment, NULL); pthread_create(&t2, NULL, increment, NULL); pthread_join(t1, NULL); pthread_join(t2, NULL); printf("Final count: %d\n", count); // Always 200000}Problem 2: Atomic Instructions

Section titled “Problem 2: Atomic Instructions”What is atomic instruction? In Intel architecture, what’s the name of the atomic instruction used to implement a mutex lock?

Instructor Solution

An atomic instruction is a low-level CPU operation that executes as a single, indivisible unit, meaning it either completes fully or fails entirely with no observable intermediate state. In multi-core systems, these instructions are crucial because they prevent other processors from interfering with a memory location while the operation is in progress, typically by briefly locking the system bus or a specific cache line. In Intel (x86/x64) architecture, the primary atomic instruction used to implement a mutex lock is XCHG Exchange.

Elaboration (by LLM)

Why “Atomic” Matters

Most high-level operations that look like a single step are actually multiple machine instructions under the hood. For example, count++ compiles to three steps: load, increment, store. Between any two of these steps, the OS can context-switch another thread in, causing a race condition.

An atomic instruction collapses a multi-step operation into a single, hardware-guaranteed indivisible step. No thread can observe it mid-execution.

How the CPU Enforces Atomicity

On x86/x64 Intel hardware, atomicity is enforced via one of two mechanisms:

- Bus locking: The CPU asserts a

LOCK#signal on the memory bus for the duration of the instruction, blocking all other cores from accessing memory - Cache line locking (preferred on modern CPUs): If the target memory address is in the L1/L2 cache, the CPU locks only that cache line rather than the entire bus, which is much faster

The XCHG Instruction

XCHG (Exchange) atomically swaps the value in a register with a value in memory. When used with a memory operand, it implicitly asserts the LOCK# prefix — even without explicitly writing LOCK XCHG.

; Spinlock acquire using XCHG; Assume: EAX = 1 (desired "locked" value), [lock_var] starts at 0

spin_loop: MOV EAX, 1 ; Load "locked" value into register XCHG EAX, [lock_var] ; Atomically swap: EAX ← old value, [lock_var] ← 1 TEST EAX, EAX ; Was the old value 0 (was it unlocked)? JNZ spin_loop ; If not, spin and try again

; --- Critical Section ---

MOV [lock_var], 0 ; Release: write 0 back to memoryAtomic Operation Summary

| Instruction | Operation | Atomicity |

|---|---|---|

XCHG reg, mem | Swap register ↔ memory | Always atomic (implicit LOCK) |

LOCK CMPXCHG | Compare-and-swap | Atomic with LOCK prefix |

LOCK XADD | Fetch-and-add | Atomic with LOCK prefix |

LOCK INC | Increment memory | Atomic with LOCK prefix |

Problem 3: Test-and-Set Instruction

Section titled “Problem 3: Test-and-Set Instruction”What’s Test-and-Set instruction? What is it used for? Briefly explain.

Instructor Solution

A Test-and-Set (TSL) instruction is an atomic hardware primitive used to write a value to a memory location and return its old value as a single, indivisible step. It works by “testing” the current state of a flag (usually checking if it is 0 or 1) and “setting” it to a locked state (1) simultaneously. This prevents a race condition where two processes might see a “0” at the same time and both attempt to enter a critical section. It is primarily used to implement low-level synchronization primitives, such as spinlocks, in operating systems and multi-threaded applications. By checking the returned old value, a process can determine if it successfully acquired the lock (if the old value was 0) or if it must continue waiting in a loop (if the old value was 1).

Elaboration (by LLM)

The Core Problem TSL Solves

Without atomicity, a naive “check then set” lock has a fatal race window:

// BROKEN — non-atomic, two threads can both pass the check!while (lock == 1) {} // (1) Thread A sees lock=0, exits looplock = 1; // (2) Thread B ALSO sees lock=0 before A sets it!// Both threads now believe they hold the lock — disaster!TSL eliminates this window by making steps (1) and (2) a single uninterruptible hardware operation.

TSL Pseudocode

// Conceptual pseudocode — executes as ONE atomic hardware instructionint TestAndSet(int *lock) { int old = *lock; // "Test": read the current value *lock = 1; // "Set": unconditionally write 1 (locked) return old; // Return what we found}Using TSL to Build a Spinlock

int lock = 0; // 0 = free, 1 = locked

void acquire(int *lock) { // Spin until we observe old value of 0 (meaning WE set it from 0→1) while (TestAndSet(lock) == 1) { // lock was already 1 — someone else holds it, keep spinning } // We got old value 0 — we successfully acquired the lock}

void release(int *lock) { *lock = 0; // Simple write; no atomicity needed for release}Step-by-Step Execution Trace

Consider two threads A and B racing to acquire the lock:

| Time | Thread A | Thread B | lock value |

|---|---|---|---|

| T1 | TestAndSet(&lock) → returns 0, sets lock=1 | — | 1 |

| T2 | Enters critical section ✅ | TestAndSet(&lock) → returns 1 (spins) | 1 |

| T3 | In critical section | Spinning… | 1 |

| T4 | release() → lock=0 | TestAndSet(&lock) → returns 0, sets lock=1 | 1 |

| T5 | Done | Enters critical section ✅ | 1 |

How It Differs from XCHG

TSL and XCHG are functionally equivalent in purpose. On x86, XCHG is the physical realization of TSL. On other architectures (e.g., ARM), the equivalent is LDREX/STREX (load/store exclusive).

Problem 4: Spinlocks in Single vs. Multiprocessor Systems

Section titled “Problem 4: Spinlocks in Single vs. Multiprocessor Systems”Briefly explain why spinlocks are not appropriate for single processor systems yet are often used in multiprocessor systems.

Instructor Solution

Spinlocks are inappropriate for single-processor systems because if a thread “spins” while waiting for a resource, it consumes 100% of the CPU’s cycles, preventing the very thread that holds the lock from running and releasing it. On a single core, the only way for the lock-holder to finish is if the spinning thread is context-switched out, making the busy-waiting period entirely wasted time. Conversely, spinlocks are favored in multiprocessor systems because the lock-holder can run on a different core simultaneously. If the critical section is short, it is often more efficient to have a thread spin for a few nanoseconds than to incur the heavy overhead of a context switch—which involves saving registers and flushing caches required to put the thread to sleep and wake it up later.

Elaboration (by LLM)

What Does “Spinning” Mean?

A spinlock acquires the lock via a tight busy-wait loop — the thread actively checks the lock condition over and over instead of going to sleep:

// The thread never yields; it hammers the CPU checking the lockwhile (TestAndSet(&lock) == 1) { // Do nothing — just spin and check again}Single-Processor: A Self-Defeating Loop

On a single-core machine, there is only one execution stream at a time. The moment a thread starts spinning, it occupies the CPU 100%. Consider what this means:

Core 0: [ Thread A spins... spins... spins... ] ← uses ALL CPU time Thread B (lock holder) CAN'T RUNThread B — the one that actually holds the lock and needs to release it — cannot make any progress because it never gets CPU time. The only escape is a timer interrupt that forces a context switch, at which point the spinning was entirely wasteful. You’d have been better off blocking immediately.

Multiprocessor: Parallel Progress

On a multiprocessor system, the lock-holder and the spinner can execute simultaneously on different cores:

Core 0: [ Thread A spins... ] ← polls the lock variableCore 1: [ Thread B in critical section → releases lock ] ← runs in parallelThread A checks the lock, sees it free the moment Thread B releases it on Core 1, and immediately enters. No context switch needed at all. This is the ideal case.

The Context-Switch Cost Comparison

| Spinlock | Blocking (Mutex/Semaphore) | |

|---|---|---|

| Acquire (lock free) | ~1–10 ns | ~100–1000 ns |

| Acquire (lock held, short wait) | ~10–100 ns | ~10–100 µs (sleep + wake) |

| Acquire (lock held, long wait) | Wastes CPU cycles | Efficient — thread sleeps |

| CPU usage while waiting | 100% | ~0% |

When Spinlocks Win in Multiprocessor Systems

Spinlocks are the right choice when:

- The critical section is short (a few instructions)

- Lock contention is low (threads rarely collide)

- The overhead of putting a thread to sleep and waking it up would exceed the wait time

Problem 5: Race Condition

Section titled “Problem 5: Race Condition”What’s race condition? Briefly explain.

Instructor Solution

A race condition occurs when the timing or order of execution in a multi-threaded or concurrent system affects the final outcome, leading to unpredictable and often erroneous results. It happens when multiple threads access and manipulate shared data simultaneously, and the final state depends on which thread “wins the race” to finish its operation first. For example, if two threads try to increment a shared counter at the exact same moment, they might both read the same initial value, add one, and write back the same result, causing one of the increments to be lost entirely.

Elaboration (by LLM)

The Classic Counter Race

Consider count++, which looks like one operation but compiles to three machine instructions:

// High-level:count++;

// What the CPU actually does:// (1) LOAD: temp = count ← read from memory// (2) ADD: temp = temp + 1 ← compute// (3) STORE: count = temp ← write back to memoryIf two threads execute this concurrently, they can interleave in a broken way:

| Step | Thread A | Thread B | count in memory |

|---|---|---|---|

| 1 | LOAD → temp_A = 5 | — | 5 |

| 2 | — | LOAD → temp_B = 5 | 5 |

| 3 | ADD → temp_A = 6 | — | 5 |

| 4 | — | ADD → temp_B = 6 | 5 |

| 5 | STORE → count = 6 | — | 6 |

| 6 | — | STORE → count = 6 | 6 ← should be 7! |

One increment is silently lost. This is a race condition.

Key Characteristics of a Race Condition

- Non-deterministic: The bug may only appear under specific timing conditions (high load, certain CPU speeds, scheduler behavior). It can be nearly impossible to reproduce reliably.

- Involves shared mutable state: Read-only shared data cannot race. The danger is always concurrent writes or a mix of reads and writes.

- No guaranteed error signal: The program often doesn’t crash — it just silently produces wrong results, making races especially insidious.

A Broader Example: Read-Modify-Write Pattern

Any “read → modify → write” sequence on shared data is a race condition candidate:

// Example 1: Counter incrementbalance = balance + deposit; // Read-modify-write: RACE

// Example 2: Conditional updateif (list != NULL) { // Check node = list->next; // Use — another thread may have freed list!} // TIME-OF-CHECK vs TIME-OF-USE (TOCTOU) race

// Example 3: Lazy initializationif (instance == NULL) { // Two threads can both see NULL instance = new Singleton(); // Both create an instance — one is leaked}How to Detect Race Conditions

- Thread sanitizers: Tools like

ThreadSanitizer(TSan, built into GCC/Clang with-fsanitize=thread) instrument your code at compile time to detect concurrent accesses at runtime. - Stress testing: Run the program under high concurrency and repetition to increase the probability of triggering a race.

- Static analysis: Tools like

Helgrind(part of Valgrind) can find potential lock-order violations and unprotected accesses.

Problem 6: Critical Section Requirements

Section titled “Problem 6: Critical Section Requirements”List 4 requirements of a critical section.

Instructor Solution

To ensure that concurrent processes function correctly and safely, a solution to the critical section problem must satisfy these four fundamental requirements:

(1) Mutual Exclusion: If a process is executing in its critical section, no other processes can be executing in their critical sections for the same resource at the same time.

(2) Progress: If no process is in its critical section and some processes wish to enter, the selection of the next process to enter cannot be postponed indefinitely, and only those processes not in their remainder section can participate in the decision.

(3) Bounded Waiting (No Starvation): There must be a limit on the number of times other processes are allowed to enter their critical sections after a process has made a request to enter its own and before that request is granted, preventing starvation.

(4) Performance: The overhead of entering and exiting the critical section must be small with respect to the work being done within it.

Elaboration (by LLM)

These four requirements define a correct and practical solution to the critical section problem. Each guards against a different kind of failure:

1. Mutual Exclusion — Correctness

This is the primary safety guarantee. At any point in time, at most one process may be inside the critical section for a given shared resource.

Process A: [entry] → [CRITICAL SECTION] → [exit]Process B: [entry] → BLOCKED → [entry] → [CRITICAL SECTION] ↑ Must wait here — mutual exclusion enforcedWithout it: Race conditions and data corruption occur, as seen in Problem 5.

2. Progress — No Deadlock

If no process is currently in the critical section, and one or more processes want to enter, then a decision about who enters next must be made in finite time. Processes stuck in their “remainder section” (the non-critical parts of their code) cannot block others from deciding.

Without it: The system could deadlock even when no one is in the critical section — all processes freeze waiting for a decision that never comes.

Example of a progress violation: A poorly written lock that checks a flag set by process A, but A is sleeping — no one enters, no one decides.

3. Bounded Waiting — No Starvation

Once a process requests entry to its critical section, there is a finite upper bound on how many times other processes are allowed to enter before this process gets its turn.

Without it: A process could wait forever — technically the system is making progress, but one unlucky process is perpetually skipped (starved).

Example: If a lock always gives preference to the process with the lowest PID, a high-PID process might never get in if lower-PID processes keep arriving.

4. Performance — Practical Efficiency

The overhead of the entry/exit protocol must be proportionally small compared to the work done inside the critical section. A lock that takes 10 ms to acquire is useless for protecting a 1 µs operation.

Considerations:

| Metric | Good Sign | Red Flag |

|---|---|---|

| Lock acquisition time | Nanoseconds | Milliseconds |

| CPU usage while waiting | Low (blocking) or brief (spinning) | 100% spin for long waits |

| Contention overhead | Scales gracefully | Degrades sharply under load |

Summary: What Each Requirement Prevents

| Requirement | Failure Mode It Prevents |

|---|---|

| Mutual Exclusion | Race conditions / data corruption |

| Progress | Deadlock (no one in CS, but no one can enter) |

| Bounded Waiting | Starvation (a process waits forever) |

| Performance | Impractical lock overhead |

Problem 7: Disabling Interrupts for Locks

Section titled “Problem 7: Disabling Interrupts for Locks”List 2 reasons why it is a bad idea to implement locks by disabling interrupts.

Instructor Solution

While disabling interrupts is a simple way to achieve mutual exclusion on a single-processor system, it is generally considered a bad idea for two primary reasons:

(1) System Responsiveness and Safety: Disabling interrupts stops the CPU from responding to external events, such as keyboard input, network packets, or even critical hardware timers. If a thread disables interrupts and then enters an infinite loop or crashes, the entire system will “freeze” because the operating system cannot regain control to preempt the failing thread, potentially leading to a total system hang.

(2) Multiprocessor Ineffectiveness: In modern multiprocessor systems, disabling interrupts only affects the local core where the instruction is executed. Other cores continue to run and can still access shared memory, meaning the “lock” fails to provide mutual exclusion across the entire system. To make it work, the hardware would need to broadcast the “disable” signal to all cores, which is an incredibly slow and inefficient operation that severely degrades performance.

Elaboration (by LLM)

How Interrupt Disabling “Works” as a Lock

On a single-core system, the OS achieves mutual exclusion by briefly disabling hardware interrupts around critical kernel sections:

cli(); // Clear Interrupt Flag — CPU ignores all hardware interrupts/* Critical Section */ // No context switch possible; we "own" the CPUsti(); // Set Interrupt Flag — interrupts re-enabledSince a preemptive context switch is triggered by a timer interrupt, disabling interrupts prevents any thread switch from occurring. This is simple and effective — for kernel code on a single core. But it has two critical flaws:

Flaw 1: System-Wide Responsiveness and Safety

When interrupts are disabled, the CPU becomes deaf to the outside world:

- The keyboard interrupt that handles your keystrokes? Ignored.

- The network card saying “packet arrived”? Ignored.

- The system timer that drives scheduling and

sleep()? Ignored. - A hardware failure signal? Ignored.

Timeline with interrupts disabled:─────────────────────────────────────────────────────Interrupt disabled ──► [thread runs] ──► [BUG: infinite loop] ↑ OS timer can't fire — system hangs FOREVERThis is a security and reliability hazard. User-space code must never be given this power. Even in kernel space, interrupt-disabled regions must be kept extremely short (a handful of instructions).

Flaw 2: Useless on Multiprocessor Systems

The cli instruction (x86 “Clear Interrupt Flag”) only affects the local core’s interrupt flag. All other cores continue executing with interrupts fully enabled:

Core 0: cli() → [Critical Section] ← interrupts disabled here onlyCore 1: [runs freely, can access shared memory!]Core 2: [runs freely, can access shared memory!]There is no mutual exclusion across cores. Thread B on Core 1 can walk right into the “protected” shared data while Thread A on Core 0 thinks it holds an exclusive lock.

The “fix” — broadcasting a “disable interrupts” message to all cores via an Inter-Processor Interrupt (IPI) — is so expensive (microseconds of bus traffic) that it negates any performance advantage and is never used in practice.

Comparison: Interrupt Disabling vs. Proper Locks

| Property | Interrupt Disabling | Mutex / Spinlock |

|---|---|---|

| Single-core correctness | ✅ Yes | ✅ Yes |

| Multi-core correctness | ❌ No | ✅ Yes |

| System responsiveness | ❌ Broken while held | ✅ Unaffected |

| User-space safe | ❌ No (privileged instruction) | ✅ Yes |

| Safe for long critical sections | ❌ Dangerous | ✅ (with blocking) |

Problem 8: Deadlock

Section titled “Problem 8: Deadlock”What’s a deadlock? How does it occur? Briefly explain.

Instructor Solution

A deadlock is a specific state in a concurrent system where a set of processes are permanently blocked because each process is holding a resource and waiting to acquire a resource held by another process in the group. When this happens, none of the involved processes can ever proceed, effectively freezing that portion of the system until external intervention occurs.

Elaboration (by LLM)

The Classic Two-Thread Deadlock

The simplest deadlock involves two threads and two locks, each acquiring them in opposite order:

pthread_mutex_t lock_A = PTHREAD_MUTEX_INITIALIZER;pthread_mutex_t lock_B = PTHREAD_MUTEX_INITIALIZER;

// Thread 1 // Thread 2pthread_mutex_lock(&lock_A); pthread_mutex_lock(&lock_B);// ... does some work ... // ... does some work ...pthread_mutex_lock(&lock_B); // BLOCKS — Thread 2 holds B pthread_mutex_lock(&lock_A); // BLOCKS — Thread 1 holds A

// Neither thread can proceed. Both wait forever.Resource Allocation Graph

A deadlock can be visualized as a cycle in a resource allocation graph:

Thread 1 ──holds──► Lock A ◄──wants── Thread 2Thread 1 ──wants──► Lock B ──holds──► Thread 2If this graph contains a cycle, deadlock either exists or is possible.

The Four Coffman Conditions

A deadlock can only occur when all four of these conditions hold simultaneously. Preventing any one of them prevents deadlock:

| Condition | Description | How to Break It |

|---|---|---|

| Mutual Exclusion | Resources cannot be shared | Use lock-free data structures |

| Hold and Wait | A process holds resources while waiting for more | Require acquiring all locks at once (all-or-nothing) |

| No Preemption | Resources cannot be forcibly taken away | Allow the OS to preempt resources |

| Circular Wait | A cycle exists in the resource-wait graph | Enforce a global lock ordering |

The Most Practical Prevention: Lock Ordering

The simplest and most widely used deadlock prevention technique is enforcing a consistent global ordering on lock acquisition. If every thread always acquires lock_A before lock_B, a cycle can never form:

// Thread 1 — always acquire A before Bpthread_mutex_lock(&lock_A); // ← always firstpthread_mutex_lock(&lock_B); // ← always second// ... work ...pthread_mutex_unlock(&lock_B);pthread_mutex_unlock(&lock_A);

// Thread 2 — same ordering!pthread_mutex_lock(&lock_A); // ← always firstpthread_mutex_lock(&lock_B); // ← always second// ... work ...pthread_mutex_unlock(&lock_B);pthread_mutex_unlock(&lock_A);Deadlock vs. Livelock vs. Starvation

These are related but distinct problems:

| Problem | Description | Threads making progress? |

|---|---|---|

| Deadlock | Threads are permanently blocked waiting for each other | ❌ No |

| Livelock | Threads keep changing state but make no actual progress (e.g., both politely “backing off” forever) | Technically yes, but effectively no |

| Starvation | A thread is perpetually denied a resource by other threads | Some threads yes, the starved one no |

Problem 9: Mutex Lock vs. Binary Semaphore

Section titled “Problem 9: Mutex Lock vs. Binary Semaphore”Compare and contrast a mutex lock to a binary semaphore in terms of purpose and ownership.

Instructor Solution

While a mutex and a binary semaphore both use a 0/1 state to manage access, they differ fundamentally in intent. A mutex is strictly a locking mechanism designed for ownership and mutual exclusion; only the thread that successfully “locked” the mutex is permitted to “unlock” it. In contrast, a binary semaphore is a signaling mechanism used for task synchronization and has no concept of ownership. Any thread (or even an interrupt handler) can “signal” (post/up) a semaphore, even if it wasn’t the one that “waited” (pend/down) on it. This allows binary semaphores to coordinate actions between different parts of a system, such as a producer notifying a consumer that data is ready, whereas a mutex is purely about protecting a shared resource from concurrent access.

| Feature | Mutex | Binary Semaphore |

|---|---|---|

| Purpose | Mutual Exclusion (Protecting Resources) | Synchronization (Signaling Events) |

| Ownership | Yes (Only the locker can unlock) | No (Any thread can signal) |

| Priority Inversion | Often includes protocols to prevent it | Susceptible (No ownership to track) |

| Usage | ”I am using this." | "The data is ready.” |

Elaboration (by LLM)

Mental Model: Two Different Metaphors

Mutex = Bathroom Key 🔑

Only the person who takes the key can return it. The key represents ownership. You lock it when you enter, unlock it when you leave. No one else can “return” your key for you.

Binary Semaphore = Traffic Light 🚦

A red/green signal. The driver that stops at red is not the one who changes it to green — the traffic controller does. There is no “owner” of the signal.

Mutex: Ownership Enforced

The key property of a mutex is that the locking thread must be the unlocking thread. Many implementations (e.g., POSIX) will return an error or invoke undefined behavior if another thread tries to unlock a mutex it didn’t lock.

// Thread Apthread_mutex_lock(&m); /* critical section */pthread_mutex_unlock(&m); // ✅ Thread A unlocks what Thread A locked

// Thread Bpthread_mutex_unlock(&m); // ❌ Undefined behavior / error — Thread B doesn't own itThis ownership model enables important features:

- Deadlock detection: The OS knows which thread holds which mutex

- Priority inheritance: If a high-priority thread waits on a mutex held by a low-priority thread, the OS can temporarily boost the low-priority thread’s priority to prevent priority inversion

Binary Semaphore: No Ownership

A binary semaphore is just an integer constrained to 0 or 1 with two atomic operations:

wait()/down()/P(): Decrement. Block if value is 0.signal()/up()/V(): Increment (up to 1). Wake a blocked thread if any.

sem_t data_ready;sem_init(&data_ready, 0, 0); // Starts at 0 (no data yet)

// Producer threadproduce_data();sem_post(&data_ready); // Signal: "data is ready" — anyone can call this

// Consumer threadsem_wait(&data_ready); // Wait for signal before consumingconsume_data();The consumer did the wait, but the producer did the signal. This cross-thread signaling is impossible with a mutex.

When to Use Which

| Scenario | Use |

|---|---|

| Protecting a shared resource (linked list, counter, file) | Mutex |

| Signaling between producer and consumer | Binary Semaphore |

| One thread waiting for another to complete a task | Binary Semaphore |

| Interrupt handler waking a thread | Binary Semaphore (ISRs can’t block, but can post) |

| Preventing concurrent access to a function | Mutex |

Problem 10: Cross-Thread Mutex Unlock

Section titled “Problem 10: Cross-Thread Mutex Unlock”Is it possible for one thread to lock a mutex lock and another thread to unlock it? Why or why not. Briefly explain.

Instructor Solution

No, in a standard implementation of a mutex, it is not possible for one thread to lock it and a different thread to unlock it. This is because a mutex is built on the principle of ownership; the thread that successfully acquires the lock “owns” the right to the critical section and is the only entity responsible for releasing it once the task is complete.

Elaboration (by LLM)

Why Ownership Is Fundamental to a Mutex

A mutex internally records the thread ID of the acquiring thread at lock time. When unlock is called, the implementation checks whether the calling thread matches the recorded owner. If not, the behavior is either an explicit error or undefined behavior:

// Conceptual internal behavior of pthread_mutex_unlock:int pthread_mutex_unlock(pthread_mutex_t *m) { if (m->owner != current_thread_id()) { return EPERM; // Error: "Operation not permitted" } m->owner = NONE; // ... wake next waiting thread ... return 0;}What Happens If You Try It

pthread_mutex_t m = PTHREAD_MUTEX_INITIALIZER;

void *thread_A(void *arg) { pthread_mutex_lock(&m); // Thread A acquires ownership printf("A locked\n"); sleep(1); // A never unlocks — ownership stays with A return NULL;}

void *thread_B(void *arg) { sleep(0.5); pthread_mutex_unlock(&m); // ❌ Thread B does not own this mutex // On Linux with PTHREAD_MUTEX_ERRORCHECK: returns EPERM // On Linux with PTHREAD_MUTEX_DEFAULT: undefined behavior return NULL;}The outcome depends on the mutex type:

| Mutex Type | Cross-thread unlock behavior |

|---|---|

PTHREAD_MUTEX_DEFAULT | Undefined behavior (may appear to “work” but corrupts state) |

PTHREAD_MUTEX_ERRORCHECK | Returns EPERM error code |

PTHREAD_MUTEX_RECURSIVE | Returns EPERM error code |

PTHREAD_MUTEX_ROBUST | Returns EPERM error code |

Why This Rule Enables Important Safety Features

The ownership constraint is not arbitrary — it enables:

Deadlock detection

The OS knows exactly which thread holds which mutex. Tooling (like pthread’s error-checking mode or debuggers) can detect cycles in the wait graph.

Priority inheritance

If a high-priority thread waits on a mutex, the OS can temporarily boost the owner thread’s priority. Without knowing the owner, this is impossible.

If You Need Cross-Thread Signaling — Use a Semaphore

If your design requires one thread to “release” a resource that was “acquired” by a different thread, a binary semaphore (Problem 9) is the correct tool. Semaphores have no ownership and explicitly support this pattern:

sem_t sem;sem_init(&sem, 0, 0); // Starts locked (0)

// Thread A — does the work, then signalsvoid *worker(void *arg) { do_work(); sem_post(&sem); // "Release" — Thread A signals even though it didn't wait return NULL;}

// Thread B — waits for signal from Avoid *waiter(void *arg) { sem_wait(&sem); // Blocks until A posts process_results(); return NULL;}Problem 11: Recursive Locking

Section titled “Problem 11: Recursive Locking”Is recursive locking possible with mutex locks? Is it possible with binary semaphores? Why or why not. Briefly explain.

Instructor Solution

Recursive locking is possible with a recursive mutex, but generally not with a binary semaphore. A recursive mutex allows the owner thread to lock the same resource multiple times without deadlocking itself, provided it performs a matching number of unlocks. A standard mutex does not allow this; if a thread tries to lock it twice, it will typically deadlock because it’s waiting for itself to release the first lock. A binary semaphore is even less suited for this because it lacks the concept of ownership; it doesn’t track which thread called “wait,” so if a thread waits on a semaphore twice, the semaphore simply sees its count hit zero and blocks the thread indefinitely, unaware that the thread already “holds” the signal.

Elaboration (by LLM)

What Is Recursive Locking?

Recursive locking occurs when a thread attempts to acquire a lock it already holds. This commonly arises in recursive functions or when a function that acquires a lock calls another function that also tries to acquire the same lock:

void process_node(Node *n) { pthread_mutex_lock(&tree_lock); // Acquire lock // ... if (n->child) { process_node(n->child); // Calls itself — tries to lock AGAIN! } pthread_mutex_unlock(&tree_lock);}Case 1: Standard (Non-Recursive) Mutex — Deadlock

A standard mutex tracks its owner, but has a lock count of exactly 0 or 1. When the same thread tries to lock it a second time:

Thread A locks mutex → success (count: 0→1, owner: A)Thread A locks mutex again → DEADLOCK Thread A is now waiting for itself to release a lock it holds. No one else can release it. The thread hangs forever.pthread_mutex_t m = PTHREAD_MUTEX_INITIALIZER; // Non-recursive by default

pthread_mutex_lock(&m); // ✅ Succeedspthread_mutex_lock(&m); // ❌ Deadlock — thread waits for itselfCase 2: Recursive Mutex — Works With a Counter

A recursive mutex extends the standard mutex with an acquisition count. Each lock increments the count; each unlock decrements it. The mutex is only truly released when the count reaches 0:

pthread_mutexattr_t attr;pthread_mutexattr_init(&attr);pthread_mutexattr_settype(&attr, PTHREAD_MUTEX_RECURSIVE);pthread_mutex_t m;pthread_mutex_init(&m, &attr);

pthread_mutex_lock(&m); // count: 0 → 1, owner: Thread Apthread_mutex_lock(&m); // count: 1 → 2, same owner — NO DEADLOCKpthread_mutex_lock(&m); // count: 2 → 3

pthread_mutex_unlock(&m); // count: 3 → 2pthread_mutex_unlock(&m); // count: 2 → 1pthread_mutex_unlock(&m); // count: 1 → 0 — mutex truly released, other threads can acquireInternal State of a Recursive Mutex

| State | Standard Mutex | Recursive Mutex |

|---|---|---|

| Stores owner thread ID | ✅ Yes | ✅ Yes |

| Stores acquisition count | ❌ No | ✅ Yes |

| Same-thread re-lock | ❌ Deadlock | ✅ Increments count |

| Release condition | One unlock | unlock count matches lock count |

Case 3: Binary Semaphore — Immediate Deadlock

A binary semaphore has no owner tracking at all. Its state is simply 0 (unavailable) or 1 (available). When any thread calls wait() and the value is 0, it blocks — regardless of whether it was the thread that previously called wait():

Thread A calls sem_wait() → value: 1→0, Thread A continuesThread A calls sem_wait() → value is 0 → BLOCK The semaphore has no idea Thread A already "holds" it. Thread A now waits for a signal that will never come. Deadlock.Binary Semaphore State Machine:

sem_wait() sem_signal() ↓ ↓[value=1] ──wait──► [value=0] ──wait──► [BLOCKED] ↑ No owner concept — blocks foreverSummary Comparison

| Standard Mutex | Recursive Mutex | Binary Semaphore | |

|---|---|---|---|

| Recursive lock by same thread | ❌ Deadlock | ✅ Supported | ❌ Deadlock |

| Ownership tracked | ✅ Yes | ✅ Yes | ❌ No |

| Acquisition count | ❌ No | ✅ Yes | ❌ No |

| Use case | Mutual exclusion | Reentrant functions | Signaling/events |

Problem 12: Purpose of Recursive Locking

Section titled “Problem 12: Purpose of Recursive Locking”What’s the purpose of recursive locking and how do you do it? Briefly explain.

Instructor Solution

The primary purpose of recursive locking (also known as reentrant locking) is to allow a thread to reacquire a lock it already holds without causing a deadlock. This is essential in complex software designs where a function that acquires a lock calls another function that also attempts to acquire that same lock—common in object-oriented programming or nested library calls. Without a recursive mutex, the second acquisition attempt would block the thread indefinitely. Internally, it keeps track of the owner thread and a recursion count; each time the owner locks it, the count increments, and each unlock decrements it. The resource is only truly released to other threads when the count returns to zero.

Elaboration (by LLM)

The Core Problem: Reentrant Functions and Nested Lock Acquisition

Recursive locking becomes necessary whenever a function that holds a lock needs to call another function that also tries to acquire the same lock. This pattern is especially common in:

- Recursive algorithms (tree traversal, graph search) where each call frame acquires the same lock

- Object-oriented designs where a public method calls another public method on the same object

- Library code where an internal helper function and its caller both use the same guard lock

Without a recursive mutex, the second lock() call from the same thread deadlocks immediately — the thread waits for itself to release the lock it already holds.

Motivating Example: Tree Traversal

pthread_mutex_t tree_lock = PTHREAD_MUTEX_INITIALIZER; // standard (non-recursive)

int sum_tree(Node *n) { pthread_mutex_lock(&tree_lock); // Lock acquired (depth 1) if (n == NULL) { pthread_mutex_unlock(&tree_lock); return 0; } int val = n->value + sum_tree(n->left) // ❌ tries to lock again — DEADLOCK + sum_tree(n->right); pthread_mutex_unlock(&tree_lock); return val;}How to Enable Recursive Locking in POSIX

You must explicitly create the mutex with the PTHREAD_MUTEX_RECURSIVE attribute:

pthread_mutex_t lock;pthread_mutexattr_t attr;

pthread_mutexattr_init(&attr);pthread_mutexattr_settype(&attr, PTHREAD_MUTEX_RECURSIVE); // Key linepthread_mutex_init(&lock, &attr);pthread_mutexattr_destroy(&attr);With this in place, the tree traversal works correctly:

pthread_mutex_t tree_lock; // initialized as RECURSIVE (see above)

int sum_tree(Node *n) { pthread_mutex_lock(&tree_lock); // depth 1: count → 1 if (n == NULL) { pthread_mutex_unlock(&tree_lock); return 0; } int val = n->value + sum_tree(n->left) // depth 2: count → 2 (no deadlock) + sum_tree(n->right); // depth 2: count → 2 pthread_mutex_unlock(&tree_lock); // count → 1 (still locked) return val; // final unlock → count → 0 (truly released)}Internal State: How the Count Works

Thread A calls lock() → owner=A, count=1Thread A calls lock() → owner=A, count=2 (same owner: just increment)Thread A calls lock() → owner=A, count=3Thread A calls unlock() → owner=A, count=2Thread A calls unlock() → owner=A, count=1Thread A calls unlock() → owner=NONE, count=0 ← truly released; Thread B can now acquireAny other thread that calls lock() while count > 0 is blocked as normal.

State Comparison: Standard vs. Recursive Mutex

| Scenario | Standard Mutex | Recursive Mutex |

|---|---|---|

| Thread A locks once | ✅ Succeeds | ✅ Succeeds |

| Thread A locks twice (same thread) | ❌ Deadlock | ✅ count → 2 |

| Thread B locks while A holds | ❌ Blocks (correct) | ❌ Blocks (correct) |

| Required unlock calls | 1 | Must match lock calls |

Problem 13: Bounded Priority Inversion

Section titled “Problem 13: Bounded Priority Inversion”Briefly explain what priority inversion is and how bounded priority inversion occurs.

Instructor Solution

Priority inversion occurs when a high-priority thread is forced to wait for a low-priority thread to release a resource (like a mutex), effectively reversing their intended importance. This “simple” inversion is unavoidable because the high-priority thread must wait for the low-priority thread to release the mutex.

Elaboration (by LLM)

What Is Priority Inversion?

In a priority-based scheduler, a high-priority thread should always be able to preempt and finish before a low-priority thread. Priority inversion breaks this guarantee: a high-priority thread ends up waiting on a low-priority thread, effectively running at the low thread’s priority for some duration.

Bounded Priority Inversion: The Simple Case

Bounded priority inversion involves exactly two threads — one high-priority (H) and one low-priority (L) — and a single shared mutex:

Time →

L: [──acquires lock──][──── critical section ────][──releases lock──] ↑H: [tries to lock — BLOCKED until L releases] ↑ [H resumes here]The inversion is called bounded because the delay imposed on H is strictly limited to the remaining duration of L’s critical section. Once L finishes and releases the lock, H can immediately acquire it and proceed. There is a clear ceiling on how long H waits.

Why It Is Considered “Unavoidable”

This form of inversion is an inherent consequence of mutual exclusion. If H needs a resource that L currently holds, H must wait — there is no way around it short of abandoning the lock entirely. The resource cannot safely be used by two threads simultaneously.

The inversion is “acceptable” precisely because it is bounded: the system designer can reason about the maximum delay H will experience based on the worst-case length of L’s critical section.

Timeline Example

| Time | Low-priority (L) | High-priority (H) | Lock State |

|---|---|---|---|

| T=0 | Acquires lock ✅ | Not started | Held by L |

| T=2 | In critical section | Arrives, tries to lock | Held by L |

| T=2 | In critical section | BLOCKED ⏸ | Held by L |

| T=6 | Releases lock, exits CS | Wakes up, acquires lock ✅ | Held by H |

| T=12 | Done | Finishes critical section | Free |

H’s delay = time L spends in the critical section after H arrives. This is bounded and predictable.

Problem 14: Unbounded Priority Inversion

Section titled “Problem 14: Unbounded Priority Inversion”Briefly explain what priority inversion is and how unbounded priority inversion occurs.

Instructor Solution

Priority inversion occurs when a high-priority thread is blocked by a low-priority thread that currently holds a required resource. Unbounded priority inversion is a severe scenario where a medium-priority thread inadvertently delays the high-priority thread for an indefinite period. This happens because the medium-priority thread does not need the locked resource and can preempt the low-priority thread (the lock-holder) to perform its own work. Since the low-priority thread is “paused” by the medium-priority task, it cannot finish its critical section or release the lock, leaving the high-priority thread stuck in a waiting state despite having the highest urgency in the system.

Elaboration (by LLM)

Setup: Three Threads, One Lock

Unbounded priority inversion requires at least three threads with distinct priorities and one mutex that M does not need:

| Thread | Priority | Needs the lock? |

|---|---|---|

| H | High | ✅ Yes |

| M | Medium | ❌ No |

| L | Low | ✅ Yes |

Step-by-Step: How It Unfolds

Step 1: L acquires the lock and enters its critical section.

L: [────── holds lock, in CS ──────►

Step 2: H wakes up and tries to acquire the lock. L holds it, so H blocks.

L: [────── holds lock, in CS ──────► H: [BLOCKED — waiting for lock]

Step 3: M wakes up. M has higher priority than L and does NOT need the lock. The scheduler preempts L and runs M instead.

L: ◄─── PREEMPTED ─── M: [──────────── running (no lock needed) ────────────► H: [BLOCKED — still waiting]

Step 4: M runs for as long as it wants. L cannot release the lock because it is not scheduled. H cannot proceed because L hasn't released the lock. H is effectively stuck at M's mercy — with NO upper bound on the wait.Timeline Diagram

L acquires lock │ H arrives, blocks M arrives, preempts L ▼ ▼ ▼──────────────────────────────────────────────────────────────► timeL: [──CS──────────────────────────────────────────────]H: [BLOCKED ···································] ← waiting indefinitelyM: [──────────────────────► ← runs freely, no lockWhy the Inversion Is “Unbounded”

In bounded priority inversion (Problem 13), H’s wait is capped by L’s critical section length. Here, M’s execution interposes between L’s lock acquisition and its release — and M can run for any arbitrary duration. If many medium-priority threads exist, or M runs a long computation, H could be delayed indefinitely. The system designer has no way to analytically bound H’s delay.

The Real-World Consequence: Mars Pathfinder (1997)

The Mars Pathfinder rover experienced exactly this bug. A low-priority meteorological data thread held a mutex. A high-priority bus management thread blocked on it. A medium-priority communications thread preempted the low-priority thread repeatedly. The high-priority thread missed its deadline, triggering the watchdog timer, which reset the entire system — causing the rover to reboot repeatedly during its mission.

Problem 15: Priority Ceiling Protocol

Section titled “Problem 15: Priority Ceiling Protocol”Briefly explain how priority ceiling protocol solves the unbounded priority inversion problem.

Instructor Solution

The Priority Ceiling Protocol (PCP) prevents unbounded priority inversion by assigning each resource a “ceiling” priority equal to the highest priority of any thread that could possibly lock it. When a thread acquires a lock, the system temporarily elevates that thread’s priority to the resource’s ceiling priority, ensuring that no medium-priority thread can preempt it. This allows the lock-holder to finish its critical section as quickly as possible and release the resource to the waiting high-priority thread. By effectively “masking” any intermediate threads that fall between the low-priority holder and the high-priority waiter, PCP ensures the inversion is strictly bounded to the time it takes to complete a single critical section.

Elaboration (by LLM)

The Key Idea: Precompute and Preempt

Priority Ceiling Protocol (PCP) is a static, proactive approach. Before runtime, each mutex is assigned a ceiling priority equal to the maximum priority of any thread that will ever lock it. The moment a thread acquires a mutex, its runtime priority is immediately raised to the ceiling — regardless of whether anyone is waiting.

Setup (offline, before runtime): Mutex X can be locked by: L (priority 1), H (priority 3) → ceiling(X) = 3 (highest of all potential lockers)

Runtime: L acquires X → L's runtime priority is boosted to 3 M (priority 2) tries to preempt L → M's priority (2) ≤ ceiling(X) (3) → M is BLOCKED L finishes CS, releases X → L's priority drops back to 1 H acquires X → H runsWhy M Cannot Preempt L

With PCP, the moment L holds the lock, its effective priority equals H’s priority (the ceiling). M, with priority 2, is strictly lower than the current effective priority of L (3). The preemptive scheduler will not switch to M because M is not the highest-priority runnable thread.

Timeline Comparison

Without PCP (unbounded inversion):

L: [acquire lock][──────CS──────]H: [BLOCKED ··········]M: [────runs freely────►H waits for M to finish before L can resume.

With PCP:

L: [acquire lock, boosted to P=3][──CS──][release]H: [runs ►M: [BLOCKED — can't preempt L]H’s wait is bounded to L’s CS duration only.

Full Three-Thread Example

| Time | L (base P=1) | M (P=2) | H (P=3) | Lock X (ceiling=3) |

|---|---|---|---|---|

| T=0 | Acquires X → effective P=3 | — | — | Held by L (P=3) |

| T=2 | In CS (P=3) | Arrives, tries to preempt | — | Held by L |

| T=2 | In CS (P=3) | Blocked (P=2 < 3) | — | Held by L |

| T=4 | In CS (P=3) | Blocked | Arrives, tries to acquire X | Held by L |

| T=4 | In CS (P=3) | Blocked | Blocked (lock held) | Held by L |

| T=6 | Releases X → P drops to 1 | Unblocked, runs | Acquires X ✅ | Held by H |

PCP vs. Priority Inheritance: Key Difference

| Property | Priority Ceiling Protocol | Priority Inheritance (P16) |

|---|---|---|

| When priority is boosted | Immediately on lock acquisition | Only when a higher-priority thread blocks |

| Priority knowledge required | Yes — must know all potential lockers | No — reacts dynamically |

| Prevents deadlock | ✅ Yes (as a side effect) | ❌ Not guaranteed |

| Implementation complexity | Higher (static analysis required) | Lower (purely reactive) |

| Blocking bound | At most one priority inversion per CS | May have chained inversions |

Problem 16: Priority Inheritance Protocol

Section titled “Problem 16: Priority Inheritance Protocol”Briefly explain how priority inheritance protocol solves the unbounded priority inversion problem.

Instructor Solution

The Priority Inheritance Protocol solves unbounded priority inversion by allowing a low-priority thread to “inherit” the priority of a higher-priority thread that is currently waiting on a resource it holds. When a high-priority thread attempts to acquire a mutex held by a low-priority thread, the system temporarily boosts the low-priority thread’s priority to match the high-priority one. This prevents medium-priority threads from preempting the lock-holder, ensuring the low-priority thread can finish its work and release the lock as quickly as possible. Once the lock is released, the thread’s priority drops back to its original level, and the high-priority thread immediately takes over.

Elaboration (by LLM)

The Key Idea: React Dynamically to Blocking

Priority Inheritance Protocol (PIP) is a reactive approach. Unlike PCP, no static analysis is required. Instead, priority is only boosted at the moment a high-priority thread actually blocks on a mutex held by a lower-priority thread.

Runtime event: H (P=3) tries to acquire mutex X, held by L (P=1) → L is blocking H → System boosts L's priority to 3 (inherits from H) → M (P=2) can no longer preempt L → L finishes CS, releases X, priority drops back to 1 → H acquires X and runsStep-by-Step Timeline

L acquires X (P=1) │ H arrives, blocks on X → L inherits P=3 │ │ M arrives → can't preempt L (L now P=3 > M's P=2) ▼ ▼ ▼────────────────────────────────────────────────────────► timeL: [CS·············│boost P=3│·········CS done][release X]H: [BLOCKED ·····················][acquires X ► runs]M: [BLOCKED until H releases or L done]Without PIP, M would preempt L (since M’s P=2 > L’s original P=1), causing unbounded delay for H. With PIP, L’s effective priority (3) exceeds M’s (2), so M cannot preempt.

Priority Propagation in Chained Blocking

PIP handles chained scenarios where multiple locks are involved. If L holds lock X and is itself waiting on lock Z held by LL (even lower priority), the inherited priority propagates through the chain:

H (P=3) waits on X held by L (P=1) → L inherits P=3L (now P=3) waits on Z held by LL (P=0) → LL inherits P=3This ensures the entire blocking chain is elevated and can proceed without medium-priority interference.

Comparison to Priority Ceiling Protocol

Priority Inheritance (reactive):

- Boost only when blocking is detected

- No need to know future lock usage

- Easier to implement incrementally

- May still allow multiple blocking events (chained inversions)

Priority Ceiling (proactive):

- Boost immediately on acquisition

- Requires static knowledge of lock users

- Also prevents deadlock

- Guarantees at most one blocking event per CS

Real-World Usage

PIP is the more commonly deployed protocol in practice because it requires no prior knowledge of which threads will use which locks:

| System | Priority Inheritance Support |

|---|---|

| Linux (POSIX) | pthread_mutexattr_setprotocol(PTHREAD_PRIO_INHERIT) |

| Windows | Implemented internally in kernel dispatcher |

| VxWorks | Default behavior for priority-aware mutexes |

| FreeRTOS | Configurable via configUSE_MUTEXES |

Problem 17: Condition Variables

Section titled “Problem 17: Condition Variables”What’s a condition variable. Why and where do you need them. Briefly explain.

Instructor Solution

A condition variable is a synchronization primitive that allows a thread to suspend its execution (sleep) until a specific condition or shared state is met. Unlike a mutex, which manages access to data, a condition variable manages the waiting for a particular event. You need them to avoid “busy-waiting” or polling, which wastes CPU cycles by repeatedly checking a condition in a loop. They are commonly used inside critical sections protected by mutex locks, where a thread inside the critical section needs to wait for a specific condition to be true and needs to release the mutex lock and sleep somewhere until the condition becomes true. After releasing the mutex lock, another thread comes in, changes the condition and “signals” or “broadcasts” to wake the sleeping thread up.

Elaboration (by LLM)

The Problem: Waiting for a Condition Inside a Critical Section

Consider a consumer thread that must wait until the shared buffer is non-empty before removing an item. A naive approach using a spinlock inside the critical section is self-defeating:

// ❌ BAD: busy-wait inside the lockpthread_mutex_lock(&mutex);while (buffer_empty()) { pthread_mutex_unlock(&mutex); // release so producer can add pthread_mutex_lock(&mutex); // re-acquire to check again // Wastes CPU; still racy between unlock and re-lock}remove_item();pthread_mutex_unlock(&mutex);This is both wasteful (burns CPU cycles) and tricky to get right. A condition variable solves this cleanly.

The Solution: Condition Variable

A condition variable (CV) provides two atomic operations:

wait(cv, mutex)— atomically releases the mutex and puts the thread to sleep. When woken, re-acquires the mutex before returning.signal(cv)— wakes one thread sleeping on the CV.broadcast(cv)— wakes all threads sleeping on the CV.

The atomicity of “release mutex + sleep” is the critical property that eliminates the race between checking the condition and going to sleep.

Canonical Pattern: Producer-Consumer

pthread_mutex_t mutex = PTHREAD_MUTEX_INITIALIZER;pthread_cond_t not_empty = PTHREAD_COND_INITIALIZER;pthread_cond_t not_full = PTHREAD_COND_INITIALIZER;

// Producervoid produce(int item) { pthread_mutex_lock(&mutex); while (buffer_full()) { // (1) check condition pthread_cond_wait(¬_full, &mutex); // (2) sleep + release mutex atomically } // (3) re-check on wake (spurious wakes!) insert(buffer, item); pthread_cond_signal(¬_empty); // (4) wake a waiting consumer pthread_mutex_unlock(&mutex);}

// Consumervoid consume() { pthread_mutex_lock(&mutex); while (buffer_empty()) { // (1) check condition pthread_cond_wait(¬_empty, &mutex); // (2) sleep + release mutex atomically } int item = remove(buffer); pthread_cond_signal(¬_full); // (4) wake a waiting producer pthread_mutex_unlock(&mutex); return item;}Why while and Not if?

The condition check on wake-up must always be a while loop, never an if:

// ❌ WRONG — vulnerable to spurious wakeupsif (buffer_empty()) pthread_cond_wait(&cv, &mutex);

// ✅ CORRECT — re-checks condition after every wakeupwhile (buffer_empty()) pthread_cond_wait(&cv, &mutex);Spurious wakeups (a thread waking up for no reason, permitted by POSIX) and the case where multiple consumers are woken by broadcast but only one item is available both require re-checking the condition after returning from wait.

The Three-Part Relationship

┌──────────────────────────────────────────────────┐│ Mutex → protects shared state ││ Condition Var → waits for / signals a change ││ Predicate → the actual boolean condition ││ (e.g., "buffer not empty") │└──────────────────────────────────────────────────┘All three must be used together. A CV without a mutexis always incorrect; a mutex without a CV forces busy-waiting.signal vs. broadcast

| Operation | Wakes | Use when |

|---|---|---|

pthread_cond_signal | One waiting thread | Exactly one thread can make progress (e.g., one item produced → one consumer) |

pthread_cond_broadcast | All waiting threads | Multiple threads might be able to proceed, or the condition is complex |

Problem 18: Priority Scheduling with Multiple Locks

Section titled “Problem 18: Priority Scheduling with Multiple Locks”Three threads T1, T2, and T3 have priorities T3 > T2 > T1. That is, T3 has the highest priority and T1 has the lowest priority. The threads execute the following code:

T1:<code sequence A>lock(X);<critical section CS>unlock(X);<code sequence B>T2:<code sequence A>lock(Y);<critical section CS>unlock(Y);<code sequence B>T3:<code sequence A>lock(X);<critical section CS>unlock(X);<code sequence B>X and Y locks are initialized to “unlocked”, i.e., they are free. Code sequence A takes 2 time units to execute, code sequence B takes 4 time units to execute, and critical section CS takes 6 time units to execute. Assume lock() and unlock() are instantaneous, and that context switching is also instantaneous. T1 arrives (starts executing) at time 0, T2 at time 4, and T3 at time 8. There is only one CPU shared by all threads.

(a) Assume that the scheduler uses a priority scheduling policy with preemption: at any time, the highest priority thread that is ready (runnable and not waiting for a lock) will run. If a thread with a higher priority than the currently running thread becomes ready, preemption will occur and the higher priority thread will start running. Diagram the execution of the three threads over time.

Instructor Solution (part a)

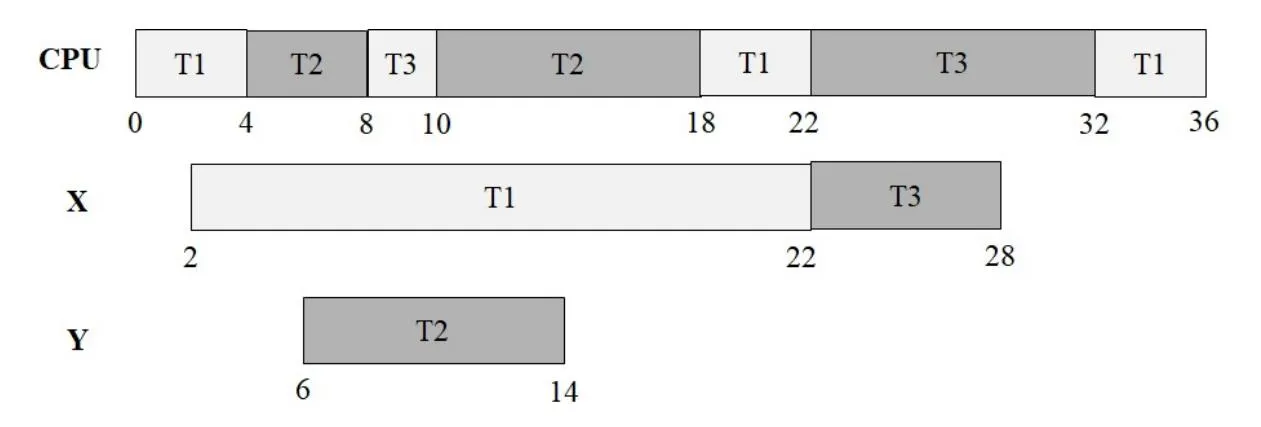

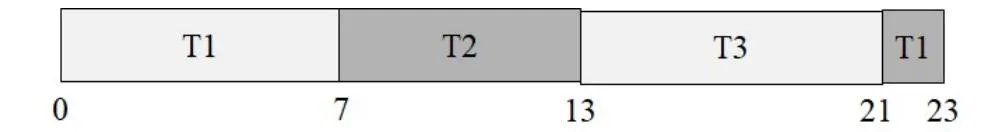

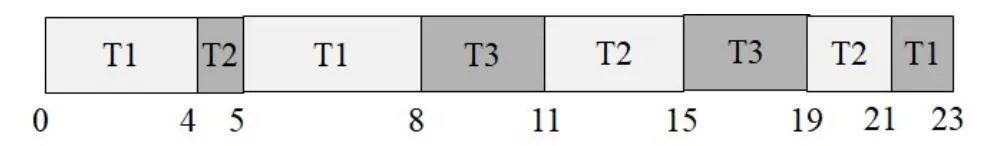

Gantt Chart(s) visualization:

CPU ┌──T1──┬───T2───┬T3┬─────T2──────┬───T1───┬──────T3──────────┬───T1──┐ 0 4 8 10 18 22 32 36

X ┌──────────────T1──────────────┬──────T3──────┐ 2 22 28

Y ┌────────T2────────┐ 6 14Elaboration (by LLM)

Tracing the Execution Step by Step

The key constraint is: the highest-priority ready (not blocked on a lock) thread always runs. Being blocked on a lock means a thread is NOT ready, even if it has high priority.

Event log:

| Time | Event | CPU | Lock X | Lock Y |

|---|---|---|---|---|

| T=0 | T1 arrives, starts running (only thread) | T1 | free | free |

| T=2 | T1 finishes seq A, acquires X | T1 | T1 | free |

| T=4 | T2 arrives (P=2 > T1’s P=1) → preempts T1 | T2 | T1 | free |

| T=6 | T2 finishes seq A, acquires Y | T2 | T1 | T2 |

| T=8 | T3 arrives (P=3 > T2’s P=2) → preempts T2 | T3 | T1 | T2 |

| T=10 | T3 finishes seq A, tries to acquire X → BLOCKED (T1 holds X) | T2 | T1 | T2 |

| T=10 | T2 is next highest ready thread → resumes CS | T2 | T1 | T2 |

| T=18 | T2 finishes CS, releases Y | T2 | T1 | free |

| T=18–22 | T2 runs seq B | T2 | T1 | free |

| T=22 | T2 finishes. T1 is only ready thread (T3 still blocked on X) → T1 resumes CS | T1 | T1 | free |

| T=28 | T1 finishes CS, releases X | T1 | free | free |

| T=28 | T3 unblocked (X now free), P=3 > T1’s P=1 → preempts T1 | T3 | T3 | free |

| T=34 | T3 finishes CS, releases X | T3 | free | free |

| T=34–38 | T3 runs seq B | T3 | free | free |

| T=38 | T3 finishes. T1 resumes seq B | T1 | free | free |

| T=42 | T1 finishes seq B. All done. | — | free | free |

Priority Inversion in Part (a)

T3 (highest priority) is blocked by T1 (lowest priority) on lock X. Meanwhile, T2 (medium priority) runs freely because it uses a different lock (Y). This is a form of bounded priority inversion: T3’s delay is bounded by how long T1 holds X — but because T2 preempted T1 earlier, T1’s time in the CS is stretched, making T3’s wait longer than it would be in isolation.

(b) “Priority inversion” is a phenomenon that occurs when a low-priority thread holds a lock, preventing a high-priority thread from running because it needs the lock. “Priority inheritance” is a technique by which a lock holder inherits the priority of the highest priority process that is also waiting on that lock. Repeat (a), but this time assuming that the scheduler employs priority inheritance. Did priority inheritance help the higher priority thread T3 finish earlier? Why or why not?

Instructor Solution (part b)

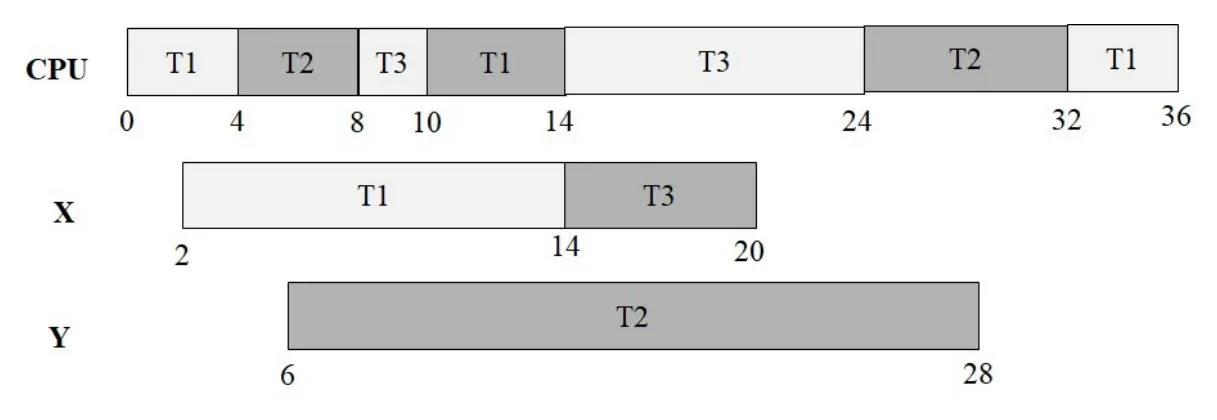

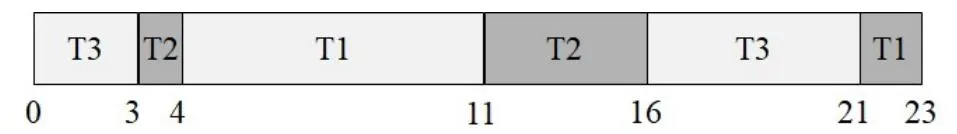

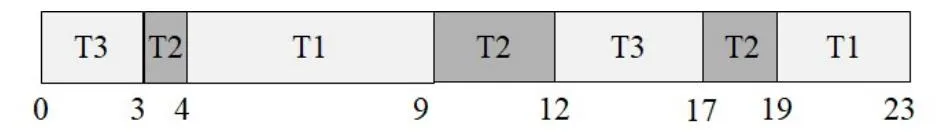

Gantt Chart(s) visualization

CPU ┌─T1─┬──T2──┬─T3─┬─T1─┬────────────T3────────────┬────T2────┬─T1─┐ 0 4 8 10 14 24 32 36

X ┌──────────────T1──────────────┬──────T3──────┐ 2 14 20

Y ┌────────────────────────T2────────────────────────┐ 6 28Yes. Priority inheritance allows T3 to finish at 24 as opposed to 32.

Elaboration (by LLM)

What Changes Under Priority Inheritance

In part (a), when T3 blocks on X (held by T1), T1 continues at its original low priority (P=1) — meaning T2 (P=2) can freely preempt T1 and run. Under Priority Inheritance, the moment T3 blocks on X, T1’s effective priority is boosted to P=3 (T3’s priority). This makes T1 higher priority than T2 (P=2), so T2 cannot preempt T1 any longer.

Step-by-Step Trace with Priority Inheritance

| Time | Event | CPU | T1 effective P | Lock X | Lock Y |

|---|---|---|---|---|---|

| T=0 | T1 arrives | T1 | 1 | free | free |

| T=2 | T1 acquires X | T1 | 1 | T1 | free |

| T=4 | T2 arrives (P=2 > T1’s P=1) → preempts | T2 | 1 | T1 | free |

| T=6 | T2 acquires Y | T2 | 1 | T1 | T2 |

| T=8 | T3 arrives (P=3) → preempts T2 | T3 | 1 | T1 | T2 |

| T=10 | T3 tries to acquire X → BLOCKED, T1 inherits P=3 | T1 | 3 | T1 | T2 |

| T=10 | T1 (now P=3) > T2 (P=2) → T1 runs, resumes CS | T1 | 3 | T1 | T2 |

| T=14 | T1 finishes CS, releases X → T1 priority drops to 1 | — | 1 | free | T2 |

| T=14 | T3 unblocked (P=3, highest ready) → acquires X, runs CS | T3 | 1 | T3 | T2 |

| T=20 | T3 finishes CS, releases X, runs seq B | T3 | 1 | free | T2 |

| T=24 | T3 finishes seq B — T3 done | — | 1 | free | T2 |

| T=24 | T2 is highest ready → runs (was in middle of CS, resumes) | T2 | 1 | free | T2 |

| T=28 | T2 finishes, releases Y, runs seq B | T2 | 1 | free | free |

| T=32 | T2 finishes seq B — T2 done | — | — | free | free |

| T=32 | T1 resumes seq B | T1 | 1 | free | free |

| T=36 | T1 finishes — T1 done | — | — | free | free |

Why T3 Finishes Earlier (24 vs. 32)

The critical difference is what happens at T=10 in each scenario:

Part (a) — No inheritance:

T3 blocks. T2 (P=2 > T1’s P=1) preempts T1 and runs its full CS (6 units) + seq B (4 units) = 10 more units before T1 can resume and finish CS. T3 is stuck until T=28 when T1 finally releases X.

Part (b) — With inheritance:

T3 blocks. T1’s priority is boosted to P=3. T2 (P=2) can no longer preempt T1. T1 immediately resumes and finishes its CS in 4 more units (T=14). T3 acquires X at T=14 and finishes its CS + seq B by T=24.

Speedup Summary

| Thread | Finish time (no inheritance) | Finish time (with inheritance) | Improvement |

|---|---|---|---|

| T3 | T=32 | T=24 | 8 units earlier |

| T2 | T=22 | T=32 | 10 units later (tradeoff) |

| T1 | T=36 | T=36 | Same |

T2 finishes later because it can no longer preempt T1 at T=10, but this is the correct tradeoff — T3’s urgency should take precedence.

Problem 19: Bounded Buffer with Producer Priority

Section titled “Problem 19: Bounded Buffer with Producer Priority”Implement a semaphore-based solution for the bounded buffer problem which adheres to the following specifications:

(1) Producers have priority over consumers. If the buffer is not full and any producer is waiting to insert an item, it has priority over any consumer.

(2) A consumer may remove an item only if there is at least one item in the buffer and no producers are waiting.

(3) Only one process may use the buffer at a time as usual.

You must implement 2 functions, Produce(int item) and Consume(). Assume a producer uses Insert(Q, item) function to insert an item to Q, and a consumer uses Remove(Q) function to remove an item from Q. Simply use these functions and do not show the details of queue manipulation. Be neat in your solution. If I do not understand what you are doing, you will not get any credit. You may use any number of POSIX mutexes, binary or counting semaphores and any other variables such as integers in your solution. Hint: This is a hybrid of two problems we have covered in class.

Instructor Solution

sem_t consumerQ = 1; // To block consumers while producers are in critical sectionmutex_t bufferMutex; // to protect shared buffermutex_t counterMutex; // to protect producerCountint producerCount = 0; // # of producers within the critical sectionsem_t full = 0; // # of full slotssem_t empty = N; // # of empty slots

Produce(item): down(empty); lock(counterMutex); producerCount++; if (producerCount == 1) down(consumerQ); // 1st producer to block consumers unlock(counterMutex); lock(bufferMutex); insert(Q, item); // Place item in Q in CS unlock(bufferMutex); lock(counterMutex); producerCount--; if (producerCount == 0) up(consumerQ); // Last producer to wake up consumers unlock(counterMutex); up(full);

Consume(): down(full); down(consumerQ); lock(bufferMutex); item = remove(Q); // Remove an item from Q in CS unlock(bufferMutex); up(consumerQ); up(empty); return item;Elaboration (by LLM)

Problem Decomposition: What Makes This Non-Standard

The classic bounded buffer (producer-consumer) problem uses symmetric semaphores. This variant adds an asymmetric priority rule: consumers are blocked entirely as long as any producer is active (waiting or inserting). This is a hybrid of:

- The bounded buffer pattern (full/empty semaphores + buffer mutex)

- The readers-writers pattern (a group-entry gate that blocks the other party while any member of the group is active)

The consumerQ semaphore acts as this gate: the first producer to arrive closes it (blocks consumers), and the last producer to leave opens it (releases consumers).

Variable Roles

| Variable | Type | Initial Value | Purpose |

|---|---|---|---|

empty | Counting semaphore | N | Tracks available empty slots; producers block here when buffer full |

full | Counting semaphore | 0 | Tracks filled slots; consumers block here when buffer empty |

bufferMutex | Mutex | unlocked | Mutual exclusion on the buffer data structure itself |

counterMutex | Mutex | unlocked | Mutual exclusion on producerCount |

consumerQ | Binary semaphore | 1 | Gate: 1=consumers may proceed, 0=consumers blocked (producer active) |

producerCount | Integer | 0 | Number of producers currently in or waiting to enter critical section |

Annotated Code Walkthrough

// ─── Shared state ───────────────────────────────────────────────sem_t consumerQ = 1; // consumer gate: open (1) initiallymutex_t bufferMutex; // guards the shared queue Qmutex_t counterMutex; // guards producerCountint producerCount = 0; // # active producers (waiting or in CS)sem_t full = 0; // slots with datasem_t empty = N; // slots available for writing

// ─── Producer ───────────────────────────────────────────────────Produce(item): down(empty); // (1) Wait for a free slot

lock(counterMutex); producerCount++; if (producerCount == 1) down(consumerQ); // (2) First producer: close gate → block consumers unlock(counterMutex);

lock(bufferMutex); insert(Q, item); // (3) Insert into buffer (exclusive access) unlock(bufferMutex);

lock(counterMutex); producerCount--; if (producerCount == 0) up(consumerQ); // (4) Last producer: open gate → allow consumers unlock(counterMutex);

up(full); // (5) Signal one more item available

// ─── Consumer ───────────────────────────────────────────────────Consume(): down(full); // (1) Wait until at least one item exists down(consumerQ); // (2) Wait until no producers are active lock(bufferMutex); item = remove(Q); // (3) Remove from buffer (exclusive access) unlock(bufferMutex); up(consumerQ); // (4) Release gate for next consumer up(empty); // (5) Signal one more empty slot return item;Why down(consumerQ) / up(consumerQ) in the Consumer?

A consumer does not hold consumerQ across the entire operation — it only needs to verify that no producers are active at the moment it enters. The sequence down → lock buffer → remove → unlock buffer → up ensures that:

- Only one consumer accesses the buffer at a time (via

bufferMutex) - The gate is re-opened immediately after, so other consumers (or checking producer status again) can proceed

Execution Trace: Producer Priority in Action

State: buffer has 2 items, 1 consumer waiting, 1 producer arrives

Consumer: down(full) ✅ (full=2→1), down(consumerQ)... waiting for valueProducer: down(empty) ✅, counterMutex: producerCount=1, down(consumerQ)=1→0 → Consumer is now blocked (consumerQ=0)Producer: inserts item, producerCount=0, up(consumerQ)=0→1 → Consumer can now proceedConsumer: down(consumerQ) ✅ (1→0), removes item, up(consumerQ) (0→1)The Readers-Writers Analogy

This pattern directly mirrors the readers-writers problem with writer priority, where writers (here: producers) block readers (consumers) as a group:

| Readers-Writers | Bounded Buffer (this problem) |

|---|---|

readerCount | producerCount |

db semaphore (blocks writers) | consumerQ semaphore (blocks consumers) |

| Reader gate mutex | counterMutex |

| Shared database | Shared buffer Q |

| Writer = exclusive access | Producer = priority access |

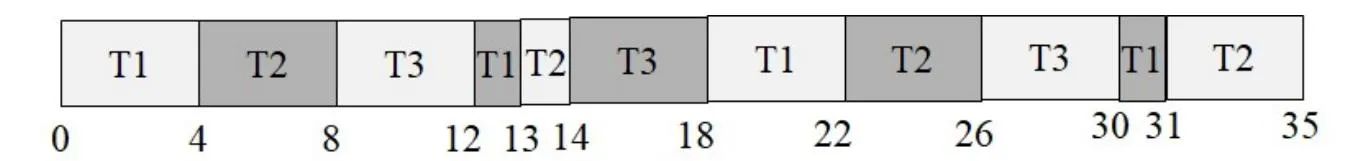

Problem 20: Process Precedence Constraints

Section titled “Problem 20: Process Precedence Constraints”Let 9 processes, p1 to p9, have the following precedence constraints. Initially, only p1 and p2 start. p3 and p4 start only after both p1 and p2 finish. p5, p6, and p7 start only when p4 finishes. p8 starts only after p3 and p5 finish. The last process, p9, starts only when p6, p7, and p8 finish. Show how these constraints can be implemented using semaphores. Appropriate wait and signal operations should be inserted at the beginning and end of the code for each process.

Instructor Solution

sem_t P1_done = 0;sem_t P2_done = 0;sem_t P3_done = 0;sem_t P4_done = 0;sem_t P5_done = 0;sem_t P6_done = 0;sem_t P7_done = 0;sem_t P8_done = 0;

P1: <execute> signal(P1_done); // Let go off P3 signal(P1_done); // Let go off P4

P2: <execute> signal(P2_done); // Let go off P3 signal(P2_done); // Let go off P4

P3: wait(P1_done); wait(P2_done); <execute> signal(P3_done); // Let go off P8

P4: wait(P1_done); wait(P2_done); <execute> signal(P4_done); // Let go off P5 signal(P4_done); // Let go off P6 signal(P4_done); // Let go off P7

P5: wait(P4_done); <execute> signal(P5_done); // Let go off P8

P6: wait(P4_done); <execute> signal(P6_done); // Let go off P9

P7: wait(P4_done); <execute> signal(P7_done); // Let go off P9

P8: wait(P3_done); wait(P5_done); <execute> signal(P8_done); // Let go off P9

P9: wait(P6_done); wait(P7_done); wait(P8_done); <execute>Elaboration (by LLM)

Understanding the Dependency Graph

Before writing any semaphore code, it helps to draw the precedence graph. Each directed edge means “must finish before the next starts”:

p1 ──┐ ┌── p5 ──┐ ├──► p3 ──┤ ├──► p8 ──┐ p2 ──┤ └── p6 ──┤ ├──► p9 │ │ │ └──► p4 ────────── ┤ │ │ └── p7 ──┘ └─────────────────────────────► (p5, p6, p7 all fan out from p4)More precisely:

p1, p2 ──► p3, p4 (fan-out: 1 process signals 2 dependents)p4 ──► p5, p6, p7 (fan-out: 1 process signals 3 dependents)p3, p5 ──► p8 (fan-in: 2 processes must both finish)p6, p7, p8 ──► p9 (fan-in: 3 processes must all finish)The Two Fundamental Semaphore Patterns

This problem is built entirely from two recurring patterns:

Fan-out (one signals many):

Process A must finish before B, C, and D all start. A signals its semaphore once per dependent:

// A<execute>signal(A_done); // for Bsignal(A_done); // for Csignal(A_done); // for D

// B, C, D each independently:wait(A_done);<execute>Because A_done is a counting semaphore, each signal increments the count by 1, and each wait decrements it — so three signals allow exactly three waiters to proceed.

Fan-in (many signal one):

Process D may only start after A, B, and C all finish. D waits on each predecessor’s semaphore:

// A, B, C each independently:<execute>signal(X_done); // one signal each

// D waits on all predecessors:wait(A_done);wait(B_done);wait(C_done);<execute>D is only unblocked once all three signals have been posted — regardless of order.

Why P1 and P2 Each Signal Twice

P3 and P4 both depend on P1 finishing. Each of them does wait(P1_done), so P1 must post P1_done twice — once to unblock P3’s wait, once to unblock P4’s wait. A counting semaphore accumulates these signals:

// P1signal(P1_done); // count: 0 → 1 (P3 can proceed)signal(P1_done); // count: 1 → 2 (P4 can proceed)

// P3wait(P1_done); // count: 2 → 1 ✅ proceeds immediately if P1 already done

// P4wait(P1_done); // count: 1 → 0 ✅ proceeds immediately if P1 already doneIf P1 hasn’t finished yet when P3 or P4 call wait, they block until P1 posts the signal. The order of arrival doesn’t matter — the semaphore handles it correctly either way.

Annotated Full Dependency Map

| Process | Waits for | Signals (how many times) | Reason |

|---|---|---|---|

| P1 | — | P1_done × 2 | P3 and P4 each need one |

| P2 | — | P2_done × 2 | P3 and P4 each need one |

| P3 | P1, P2 | P3_done × 1 | Only P8 depends on P3 |

| P4 | P1, P2 | P4_done × 3 | P5, P6, P7 each need one |

| P5 | P4 | P5_done × 1 | Only P8 depends on P5 |

| P6 | P4 | P6_done × 1 | Only P9 depends on P6 |

| P7 | P4 | P7_done × 1 | Only P9 depends on P7 |

| P8 | P3, P5 | P8_done × 1 | Only P9 depends on P8 |

| P9 | P6, P7, P8 | — | Terminal process |

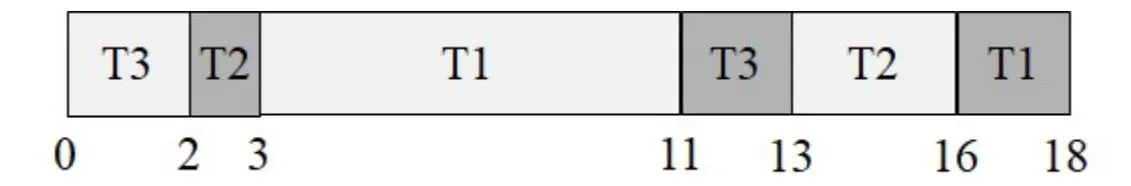

Problem 21: Thread Termination Order with Semaphores

Section titled “Problem 21: Thread Termination Order with Semaphores”Consider a process, where the main thread creates 3 additional threads T1, T2 and T3. Assume that we want the termination order of the threads to be the following: T2, T1, T3. That is, T2 terminates first, then T1 terminates and finally T3 terminates. Use binary semaphores to achieve this coordination. You must write the code for threads T1, T2 and T3. Use any number of binary semaphores. Show their initial values. The main thread terminates after T1, T2 and T3 terminate.

Instructor Solution

sem_t T1_done = 0;sem_t T2_done = 0;sem_t T3_done = 0;sem_t notify_main = 0;

T1: wait(T2_done); // Wait for T2 to be done <execute> signal(T1_done); // Let go off T3 signal(notify_main); // Notify the main thread

T2: <execute> signal(T2_done); // Let go off T1 signal(notify_main); // Notify the main thread

T3: wait(T1_done); <execute> signal(notify_main); // Notify the main thread

main: CreateThread(T1); CreateThread(T2); CreateThread(T3); for (int i=0; i<3; i++) wait(notify_main); // Wait for all 3 threads to terminate printf("Main done");Elaboration (by LLM)

Translating the Ordering Constraint

The required termination order T2 → T1 → T3 encodes two sequencing constraints:

- T1 must not terminate until T2 has terminated

- T3 must not terminate until T1 has terminated

This is a linear chain of dependencies, which maps directly to two semaphores:

T2 finishes ──► unblocks T1 ──► unblocks T3Design Decisions

Ordering semaphores (T1_done, T2_done):

Each semaphore represents a “completion event” that the next thread in the chain waits on. Initial value = 0 so the waiter always blocks until the signal is posted.

notify_main counting semaphore:

Main needs to know when all three threads are done. Rather than joining each thread individually, each thread signals notify_main once on exit, and main loops wait three times.

Thread-by-Thread Walkthrough

sem_t T2_done = 0; // T2 completion eventsem_t T1_done = 0; // T1 completion eventsem_t notify_main = 0; // counts completions for main (0→3 over runtime)

// T2 — terminates FIRST, no dependenciesT2: <execute> signal(T2_done); // unblocks T1 (T2_done: 0 → 1) signal(notify_main); // tells main T2 is done (notify_main: N → N+1)

// T1 — terminates SECOND, waits for T2T1: wait(T2_done); // blocks until T2 signals (T2_done: 1 → 0) <execute> signal(T1_done); // unblocks T3 (T1_done: 0 → 1) signal(notify_main); // tells main T1 is done

// T3 — terminates LAST, waits for T1T3: wait(T1_done); // blocks until T1 signals (T1_done: 1 → 0) <execute> signal(notify_main); // tells main T3 is done

// Main — waits for all threemain: CreateThread(T1); CreateThread(T2); CreateThread(T3); wait(notify_main); // waits for first completion wait(notify_main); // waits for second completion wait(notify_main); // waits for third completion printf("Main done\n");Execution Trace

All threads are created simultaneously. Because T1 immediately wait(T2_done) and T3 immediately wait(T1_done), only T2 can actually execute initially:

| Time | T1 | T2 | T3 | T2_done | T1_done | notify_main |

|---|---|---|---|---|---|---|

| Start | blocks on T2_done | executes | blocks on T1_done | 0 | 0 | 0 |