Homework 2 - Optimization and Kepler's Laws

This homework reviews optimization techniques including Newton’s method, explores the gradient descent proof, and applies proportional relationships to real-world astronomical data.

Problem 1: Reading and Analysis

Section titled “Problem 1: Reading and Analysis”(a) Read the article found here: https://www.quantamagazine.org/three-hundred-years-later-a-tool-from-isaac-newton-gets-an-update-20250324/. What date was this article published?

Solution

It was published on March 24, 2025.

(b) True or False? Most problems that involve finding an optimal solution boil down to minimizing a function.

Solution

True. The article states: “Mathematically, these problems get translated into a search for the minimum values of functions.”

(c) In the third paragraph, does the description of Newton’s method in optimization sound like any topic we discussed? If so, what topic? (Note that Newton’s method is sometimes called Newton-Raphson).

Solution

Yes, it sounds similar to gradient descent. Newton’s method works by using derivative information to iteratively find a better approximation of the minimum, similar to how gradient descent uses the slope to guide the search.

(d) In using Newton’s method in optimization, how many derivatives are required?

Solution

Two derivatives are required: the first derivative (slope) and the second derivative (the rate at which the slope is changing).

(e) What converges faster, Newton’s method or gradient descent? Note that the article states this answer and Wikipedia also has a nice visual: https://en.wikipedia.org/wiki/Newton%27s_method_in_optimization.

Solution

Newton’s method converges faster. It converges at a quadratic rate, while gradient descent converges at a linear rate. This means Newton’s method can identify the minimum value in fewer iterations.

(f) Given your answer above, why might someone opt to use gradient descent versus Newton’s method?

Solution

Newton’s method is more computationally expensive than gradient descent. Therefore, researchers prefer gradient descent for certain applications, such as training neural networks in machine learning, where the computational cost per iteration matters more.

(g) True or False? Ahmadi, Chaudhry and Zhang’s work “piggybacks” off previous work.

Solution

True. The article states: “Now Ahmadi, Chaudhry and Zhang have taken Nesterov’s result another step further.” Their work builds upon Yurii Nesterov’s 2021 breakthrough on approximating functions with cubic equations, extending it to work for arbitrarily many derivatives.

(h) What properties makes an equation easy to minimize?

Solution

Two properties make an equation easy to minimize: (1) The equation should be bowl-shaped or “convex,” with just one valley instead of many, so you don’t have to worry about mistaking a local valley for the global minimum. (2) If the equation can be written as a sum of squares, for example .

(i) What did Ahmadi, Chaudhry and Zhang do to the Taylor expansion?

Solution

They added a “fudge factor” to the Taylor expansion using a technique called semidefinite programming. This modification made the Taylor approximation both convex and expressible as a sum of squares, without letting it drift too far from the original function it was meant to approximate.

(j) As of 2026, which technique is our best bet for optimization? Is it Newton’s, gradient descent or Ahmadi, Chaudhry and Zhang’s technique?

Solution

As of 2026, gradient descent is still the best bet for practical applications such as self-driving cars, machine learning algorithms, and air traffic control systems. While Ahmadi, Chaudhry and Zhang’s algorithm is theoretically faster, each iteration is still more computationally expensive than gradient descent.

(k) True or False? It is possible that Ahmadi, Chaudhry and Zhang’s technique can replace gradient descent in the future.

Solution

True. If computational technology becomes more efficient in the future, making each iteration of their algorithm less computationally expensive. Ahmadi hopes that in 10 to 20 years, their algorithm will also be faster in practice, not just in theory.

Problem 2: Proof of Gradient Descent Update Formula

Section titled “Problem 2: Proof of Gradient Descent Update Formula”Recall Mitchell’s definition of machine learning. A program is said to learn from experience with respect to some class of tasks and performance measure if its performance at tasks in , as measured by , improves with experience .

(a) What is our goal in gradient descent? Specifically, if we are at then what can we say about in relation to ?

Solution

Our goal is to minimize the function. We want to be less than , meaning we want to move to a point where the function value is smaller.

(b) Geometrically, what does it mean that ?

Solution

It means we are moving from the point by number of units. The variable indicates that we are either moving to the left (if ) or to the right (if ) on the number line.

(c) Suppose is a differentiable function and we draw the tangent line to at the point . What is the equation of this tangent line? Call the equation where stands for linearization.

Solution

The equation of the tangent line is . This is the linearization of at the point .

(d) If is near , then what can we say about in relation to ?

Solution

If is near , then . The linearization is a good approximation of the function near the point .

(e) Let’s define and define . What does your answer in part (d) become?

Solution

(f) Explain why is negative.

Solution

Since we need , then by subtracting from both sides, we get . Since and is negative, must also be negative.

(g) Given your answer above, what does this imply about ?

Solution

For the inequality to hold, must always have the opposite sign of . For example, if both and were negative, their product would be positive, violating our requirement that .

(h) Why don’t we take ?

Solution

Taking directly can be an issue because the derivative may be very large, resulting in excessively large jumps between consecutive function values. This can cause us to skip over the minimum entirely.

(i) What should we take to be?

Solution

We should take to be a small number: where is a small positive scalar (like 0.01 or smaller).

(j) Substitute your answer above into . What formula do we get?

Solution

Plugging into yields:

(k) Intuitively, what does represent?

Solution

is the learning rate, which determines how big of a step we take at each iteration when updating .

Problem 3: Mitchell’s Definition and Linear Models

Section titled “Problem 3: Mitchell’s Definition and Linear Models”Recall Mitchell’s definition of machine learning. A program is said to learn from experience with respect to some class of tasks and performance measure if its performance at tasks in , as measured by , improves with experience .

Suppose we are interested in predicting a student’s score on a final exam.

For student , let:

- = hours spent studying (over the semester),

- = number of class meetings attended,

- = homework average (as a percent),

- = final exam score (from to ).

Assume we use the linear model

(a) What would the dataset, , look like?

Solution

The dataset would contain all the tuples for each of the students, where is the hours they studied, is the number of class meetings they attended, is their homework average as a percent, and is their final exam score. In table form, the columns would represent each variable, and each row would represent one student’s data:

| Hours Studied | Classes Attended | Homework Avg (%) | Exam Score |

|---|---|---|---|

| 40 | 23 | 90 | 85 |

| 30 | 20 | 85 | 80 |

| 50 | 25 | 95 | 95 |

(b) In the context of Mitchell’s definition, what is ?

Solution

The task in Mitchell’s definition is the students’ hours studied, number of classes attended, and homework average.

(c) In the context of Mitchell’s definition, what is a good way to define ?

Solution

A good way to define the performance measure is to use a metric that quantifies how well the model’s predictions match the actual exam scores. For example, we could use the mean squared error (MSE) between the predicted scores and the actual scores :

where

(d) In the context of Mitchell’s definition, what is ?

Solution

The experience is all the data points from the dataset that we use to train the model.

(e) What happens in the training step? Try to be as detailed as possible.

Solution

We start by dividing into three separate parts: a training set (80% of ), a validation set (10% of ), and a test set (10% of ). Using the training set, we try different learning rates . For each learning rate , we perform gradient descent to find the optimal parameters . For example, yields , yields , and so on until yields .

(f) What happens in the validation step?

Solution

In the validation step, we calculate the performance measure for every candidate model . We then select the parameters that yield the best performance, meaning the lowest MSE on the validation set.

(g) What happens in the test step?

Solution

In the test step, we use the best model we selected from the validation step and evaluate its performance on the test set, which contains data that the model has never seen before.

Problem 4: Kepler’s Laws of Planetary Motion

Section titled “Problem 4: Kepler’s Laws of Planetary Motion”In the house pricing example discussed in class, we made the erroneous assumption that the house price was a linear function of square footage. During office hours, a student had asked “Does linear data actually appear in real life?” Allow us to now study a different phenomenon.

For instance, , , are all proportional relationships. How can we use this definition in modeling? Suppose we see the following data of the form :

and we want to find out if this data follows a proportional relationship. What we could do is take each value of and divide by . If every quotient gives roughly the same constant , then it is reasonable to build a model of the form . Let’s investigate:

| 1 | 3.1 | 3.1 |

| 2 | 5.8 | 2.9 |

| 3 | 8.85 | 2.95 |

| 4 | 12.12 | 3.03 |

Since all of the quotients are near , then it’s perhaps reasonable to say that . With this example in mind, let’s see the following application of proportionality which was discovered approximately 400 years ago.

(a) In astronomy, the orbital period refers to the amount of time it takes for a planet to complete one orbit around the Sun. For instance, the orbital period of Earth is about 365 days since it takes the Earth 365 days to move around the Sun. Using the Internet, find out the orbital period for the following planets and fill out the chart. Please include your source by providing a link.

Solution

| Planet | Orbital Period (measured in Days) |

|---|---|

| Mercury | 88 |

| Venus | 225 |

| Earth | 365 |

| Mars | 687 |

| Jupiter | 4,333 |

| Saturn | 10,759 |

| Uranus | 30,687 |

| Neptune | 60,190 |

Source: https://spaceplace.nasa.gov/years-on-other-planets/en/

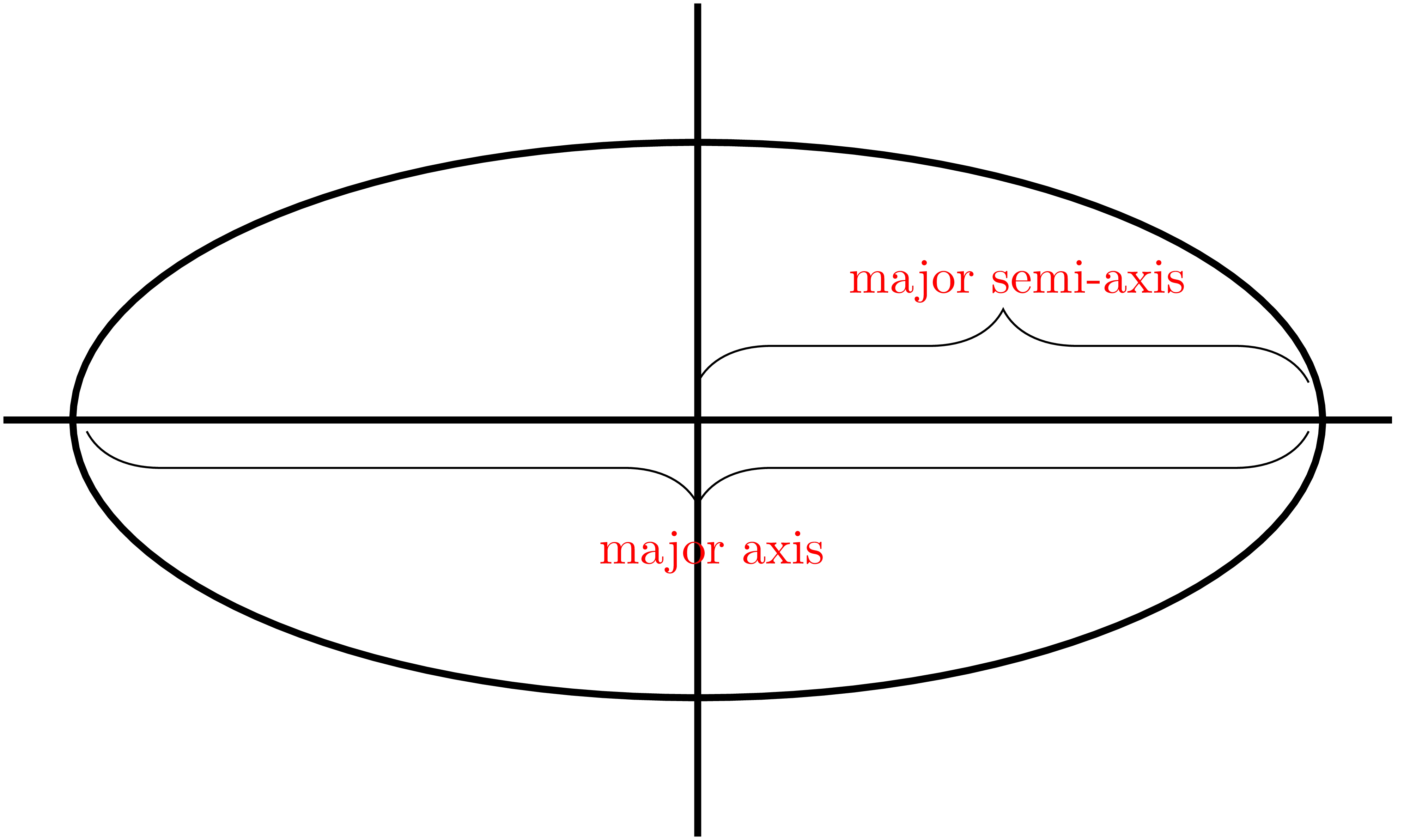

(b) Kepler’s First Law of Planetary Motion posits that each planet’s orbit about the Sun is an ellipse. Given an ellipse, consider the line segment which runs through the two foci and touches the perimeter of the ellipse. We call this line segment the major axis and half of the major axis is called the semi-major axis.

Using the Internet, find the length of the semi-major axis for each planet’s ellipse. Please include your source by providing a link.

Solution

| Planet | Major Semi-Axis (measured in AU, Astronomical Units) |

|---|---|

| Mercury | 0.387 |

| Venus | 0.723 |

| Earth | 1.0 |

| Mars | 1.524 |

| Jupiter | 5.204 |

| Saturn | 9.5826 |

| Uranus | 19.218 |

| Neptune | 30.110 |

Source: https://en.wikipedia.org/wiki/Semi-major_and_semi-minor_axes

(c) Suppose denotes the length of the major semi-axis while denotes the orbital period. Does it seem likely that ? If so then estimate the proportionality factor, . Be sure to explain your answer.

Solution

| (Semi-Major Axis) | (Orbital Period in days) | |

|---|---|---|

| 0.387 | 88 | 227.4 |

| 0.723 | 225 | 311.2 |

| 1.0 | 365 | 365.0 |

| 1.524 | 687 | 451.1 |

| 5.203 | 4,333 | 832.7 |

| 9.537 | 10,759 | 1,127.6 |

| 19.191 | 30,688 | 1,599.9 |

| 30.069 | 60,182 | 2,001.4 |

Based on the table above, does not hold because the ratios is changing by a large amount.

(d) Does it seem likely that ? If so then estimate the proportionality factor, . What does this imply?

Solution

| (Semi-Major Axis Cubed) | (Orbital Period Squared in days²) | |

|---|---|---|

| 0.0579 | 7,744 | 133,780.9 |

| 0.3777 | 50,625 | 134,126.9 |

| 1.0 | 133,225 | 133,225.0 |

| 3.5398 | 471,969 | 133,298.1 |

| 140.7644 | 18,774,889 | 133,341.4 |

| 867.6277 | 115,755,081 | 133,438.8 |

| 7,070.3024 | 941,750,144 | 133,221.7 |

| 27,181.7117 | 3,621,873,124 | 133,309.1 |

Yes, according to the table it seems that holds up well. The proportionality factor stays roughly constant at about 133,320. This means we can represent this relationship as . Even though didn’t work, a hidden relationship was found by transforming the variables.